Optimizing GenAI and Agentic AI: Balancing Cost, Quality, and Latency in Production

Building production-ready GenAI and Agentic systems is no longer about who can write the best prompt. It has become a complex engineering challenge of balancing the “Iron Triangle”: Cost, Quality, and Latency.

As we move from single-prompt chatbots to autonomous agents that can call tools and reason through multi-step tasks, the overhead compounds with every step. If you do not optimize for these three pillars early, your application will either be too slow for users, too expensive to maintain, or too unreliable for the enterprise.

Deploying a GenAI system in production is not a software problem anymore. It is an economics problem dressed up in a transformer architecture. Every token you process costs money, every guardrail adds latency, and every shortcut risks quality. This piece is a practical map of that territory: where the landmines are, and how engineers who have already stepped on a few of them learned to navigate smarter.

Section 1 — The Cost of Intelligence: A Breakdown

To optimize, you first need to know where the money is going. Many teams focus solely on the token price of the Large Language Model (LLM), but the true cost structure is more nuanced.

Dissecting the Real Cost of a GenAI Request: Most engineering teams look at their model inference bill and think that is the whole cost. It is not even half the story. Production AI systems bleed money at several distinct points, and only one of them is the model itself.

Model Inference Costs

This is the most visible cost. Frontier models like GPT-4o or Claude 3.5 Sonnet offer high reasoning capabilities but come with a premium price tag. In agentic workflows where an agent might “think” through five steps before answering, inference costs can skyrocket.

Embedding and Vector Database Costs

Every time you ingest data or perform a RAG (Retrieval-Augmented Generation) query, you incur embedding costs. Furthermore, hosting a vector database involves compute and storage fees that scale with the number and dimensionality of your vectors and the frequency of your read/write operations.

Wasted Compute

This is the “silent killer” of ROI. Wasted compute occurs when an agent enters a hallucination loop, performs unnecessary tool calls, or generates a long response that is eventually blocked by an output guardrail. Every token generated but discarded is money burned.

The Hidden Drain: Wasted compute is the most preventable cost and the least monitored. When an agent hits a context limit at message 50 and times out, you have paid for every token in that conversation and received nothing in return. When a tool call fails silently and gets retried, you pay twice. Instrument this metric before you optimize anything else.
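As a starting point for instrumenting this metric, here is a minimal sketch. The `RequestRecord` type and its fields are hypothetical; the only assumption is that you can log, per request, how many tokens were billed and whether the user actually received a usable answer.

```python
from dataclasses import dataclass

@dataclass
class RequestRecord:
    tokens_billed: int   # prompt + completion tokens you paid for
    delivered: bool      # True only if the user actually received the answer

def wasted_compute_pct(records: list[RequestRecord]) -> float:
    """Share of billed tokens that produced nothing the user saw."""
    total = sum(r.tokens_billed for r in records)
    if total == 0:
        return 0.0
    wasted = sum(r.tokens_billed for r in records if not r.delivered)
    return 100.0 * wasted / total

records = [
    RequestRecord(tokens_billed=1200, delivered=True),
    RequestRecord(tokens_billed=800, delivered=False),   # blocked by an output guardrail
    RequestRecord(tokens_billed=2000, delivered=False),  # agent timed out
]
print(f"{wasted_compute_pct(records):.1f}% of billed tokens were wasted")
```

Tracking this as a percentage rather than an absolute number makes it comparable across traffic levels.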

Section 2 — Optimizing the Architecture Before Touching the Model

The most expensive model call is the one you never had to make. Before you think about which model to use, build the infrastructure that prevents unnecessary calls entirely.

Moving from a “naive” AI setup to an optimized one requires several layers of architectural intervention.

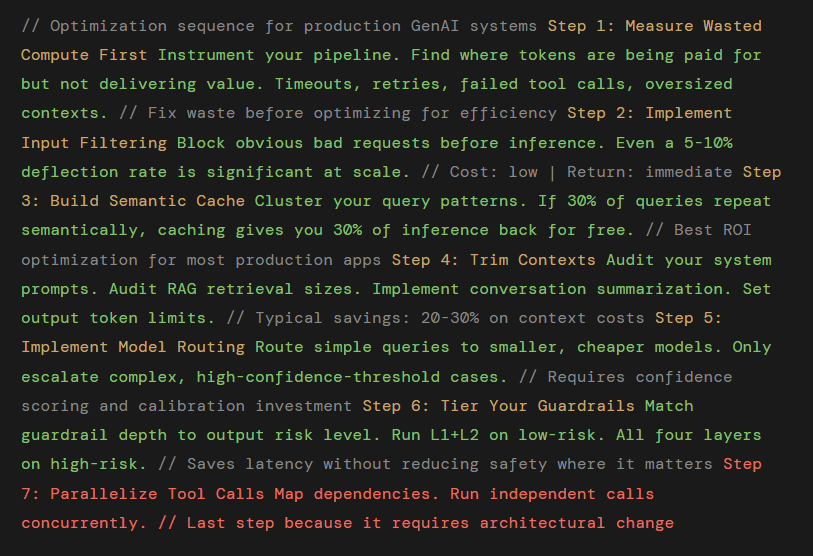

1. Input Filtering: Kill Bad Requests at the Gate

Not every message deserves a model inference. Input filters are lightweight classifiers or rule-based systems that run in milliseconds and cost fractions of a cent. They catch out-of-scope queries, detect language the system does not support, and flag requests that will fail downstream anyway. A simple classifier that deflects 15% of incoming traffic removes roughly that share of model calls from your monthly inference bill, with no visible change to the experience of in-scope users.
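A rule-based filter of this kind might look like the sketch below. The keyword list, the length cap, and the function name are all illustrative assumptions; a real deployment would likely pair such rules with a small trained classifier.

```python
import re

# Hypothetical rules for a banking assistant; tune these to your own domain.
IN_SCOPE_KEYWORDS = {"account", "balance", "card", "payment", "transfer"}
MAX_CHARS = 2000

def should_call_model(message: str) -> tuple[bool, str]:
    """Return (allowed, reason). Runs without any model call."""
    text = message.strip()
    if not text:
        return False, "empty"
    if len(text) > MAX_CHARS:
        return False, "too_long"
    words = set(re.findall(r"[a-z]+", text.lower()))
    if not words & IN_SCOPE_KEYWORDS:
        return False, "out_of_scope"
    return True, "ok"
```

Returning a reason alongside the decision lets you log why traffic was deflected and spot rules that are too aggressive.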

2. Semantic Caching: The Underrated Cost Killer

In many applications, 80 percent of users ask the same 20 percent of questions. Instead of hitting the LLM every time, implement a semantic cache. By comparing embedding vectors, you can identify whether a new question is semantically equivalent to a previously answered one. If the similarity score is high enough (e.g., a cosine similarity of 0.95 or above), you serve the cached response instantly at near-zero cost.

Exact-match caching is obvious. Semantic caching is the insight that most production applications do not have infinite query diversity. Users cluster. In any high-volume B2C application, a surprisingly large fraction of questions map to a small number of intent clusters.

Real-World Data Point: One banking chatbot found that 30% of all user questions fell into just 100 intent clusters. By implementing semantic caching with a similarity threshold, they cut inference costs by half, not by making the model faster or cheaper, but by asking it less often.
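To make the mechanism concrete, here is a minimal semantic cache sketch. The `embed` function is a toy bag-of-words stand-in for a real embedding model, and the linear scan would be replaced by a vector index in production; both are assumptions made to keep the example self-contained.

```python
import math
from collections import Counter
from typing import Optional

def embed(text: str) -> Counter:
    """Toy stand-in for a real embedding model: bag-of-words counts."""
    return Counter(text.lower().replace("?", "").split())

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[k] * b[k] for k in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

class SemanticCache:
    def __init__(self, threshold: float = 0.95):
        self.threshold = threshold
        self.entries: list[tuple[Counter, str]] = []

    def get(self, query: str) -> Optional[str]:
        q = embed(query)
        # Linear scan for the closest stored query; fine for a sketch only.
        best = max(self.entries, key=lambda e: cosine(q, e[0]), default=None)
        if best is not None and cosine(q, best[0]) >= self.threshold:
            return best[1]
        return None

    def put(self, query: str, response: str) -> None:
        self.entries.append((embed(query), response))

cache = SemanticCache()
cache.put("what are your business hours", "We are open 9am to 5pm, Monday to Friday.")
hit = cache.get("What are your business hours?")   # served from cache
miss = cache.get("how do I reset my password")     # falls through to the model
```

The threshold is the main tuning knob: set it too low and users receive answers to questions they did not ask.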

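A complete multi-level caching strategy combines semantic caching with exact-match, tool-result, and KV caching; those additional levels are covered in Section 5.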

3. Context Optimization: Stop Feeding the Model Junk

The more context you provide, the higher the cost and the slower the “Time to First Token.” Use techniques like reranking to ensure you are only sending the most relevant chunks to the model. Avoid “context stuffing” where you provide 50 documents when only 2 are needed.

Context length is the most direct lever on inference cost. Every token in the context window is a token you pay for. Bloated contexts come from three sources: conversation history that is not pruned, RAG retrieval that returns too many documents, and system prompts that were never refactored after prototyping.

Implement a context budget per request. Allocate token quotas to system prompt, conversation history, retrieved context, and the actual user query. When history exceeds its budget, summarize older turns rather than truncating them (truncation kills coherence; summarization preserves it at lower cost).
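The history part of such a budget can be sketched as follows. `count_tokens` and `summarize` are placeholders (a whitespace word count and a stub string) standing in for a real tokenizer and a cheap summarization call.

```python
def count_tokens(text: str) -> int:
    """Crude stand-in for a real tokenizer: whitespace-separated words."""
    return len(text.split())

def summarize(turns: list[str]) -> str:
    """Placeholder; in practice this would be a cheap model call."""
    return f"[summary of {len(turns)} earlier turns]"

def fit_history(turns: list[str], budget: int) -> list[str]:
    """Keep the newest turns that fit the budget; summarize the rest."""
    kept: list[str] = []
    used = 0
    for turn in reversed(turns):          # walk from newest to oldest
        cost = count_tokens(turn)
        if used + cost > budget:
            break
        kept.append(turn)
        used += cost
    kept.reverse()                        # restore chronological order
    older = turns[: len(turns) - len(kept)]
    # Note: the summary itself consumes tokens; reserve room for it in practice.
    return ([summarize(older)] if older else []) + kept
```

The same pattern applies to the other quotas: measure each component, and compress or drop whatever exceeds its share.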

4. Response Limits: Define What Enough Looks Like

Enforce strict token limits on responses to prevent the model from “rambling,” which saves both cost and time. Models will write essays if you let them. In most production use cases, long responses are not better responses. Set max token limits on outputs and instruct models explicitly to be concise. A 200-token answer that solves the problem beats a 1,000-token answer that also solves the problem. You pay for the difference.

Section 3 — Intelligent Model Routing: The Cascade Strategy

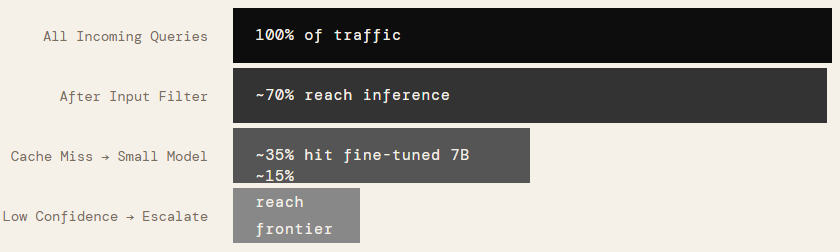

A single model serving all traffic is the most common architecture and the most expensive one. Production systems that have cracked cost efficiency almost universally route requests dynamically based on complexity.

The cascade works because query complexity follows a power law. The majority of requests in any application are routine. They do not need a frontier model with 200 billion parameters. A fine-tuned 7B model, cheap and fast, handles them well. Only when confidence scores fall below a threshold does the system escalate to the expensive model. The result: frontier model capability is available when it matters, and you are not paying frontier model prices for every “what are your business hours?” query.
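The escalation logic can be sketched in a few lines. The two model functions below are stubs, and the 0.8 threshold is an arbitrary illustrative value; how a small model produces a trustworthy confidence score is a separate problem that this sketch assumes away.

```python
from typing import Callable

# Each model is a callable returning (answer, confidence in [0, 1]).
Model = Callable[[str], tuple[str, float]]

def cascade(query: str, small: Model, frontier: Model, threshold: float = 0.8) -> tuple[str, str]:
    """Try the small model first; escalate only when confidence is low."""
    answer, confidence = small(query)
    if confidence >= threshold:
        return answer, "small"
    answer, _ = frontier(query)
    return answer, "frontier"

# Stub models for illustration only.
def small_model(q: str) -> tuple[str, float]:
    if "hours" in q.lower():
        return "We are open 9am to 5pm.", 0.97
    return "I am not sure.", 0.30

def frontier_model(q: str) -> tuple[str, float]:
    return f"Detailed answer to: {q}", 0.95

print(cascade("What are your business hours?", small_model, frontier_model))
print(cascade("Compare these two loan structures", small_model, frontier_model))
```

Returning the tier that answered makes it easy to track the escalation rate, which is the number that determines how much the cascade actually saves.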

Section 4 — The Guardrail Trade-off: Quality vs. Latency

Guardrails are essential for safety and reliability, but they are not free. Each layer of validation adds another round of computation, increasing latency. The goal is to build a “leveled” defense system.

Guardrails protect quality. They also add latency and cost. This is not a problem to solve — it is a trade-off to manage deliberately. The key insight is that guardrails should be layered, and each layer should only activate if the previous one passed.

Layer 1: Input Guardrails — Catch Problems Before Spending Money

Block prompt injections, toxic content, PII leakage, out-of-scope requests, and language mismatches before the request touches any model. This layer runs on pattern matching and lightweight classifiers. It costs almost nothing and adds under 5ms of latency. Catching a bad request here costs 0.001x what catching it at layer 4 costs.

Layer 2: Tool Guardrails — Validate Before You Damage

In Agentic AI, agents interact with the real world via APIs. Tool guardrails validate parameters before a tool is executed.

For agentic systems, tool calls are where errors become consequential. Before executing any tool: validate parameter types and ranges, check authorization scope, block destructive operations (DELETE commands, bulk writes) unless explicitly approved, and confirm the tool call makes sense given the agent’s current goal. An agent that calls the wrong tool wastes money; one that executes a DELETE on the wrong table causes incidents.
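A pre-execution check along these lines might look like the sketch below. The tool names, the `refund` amount limit, and the `ToolCall` and `AgentScope` types are hypothetical, chosen only to illustrate the scope, approval, and parameter checks described above.

```python
from dataclasses import dataclass, field

DESTRUCTIVE = {"delete_rows", "bulk_update"}   # hypothetical tool names

@dataclass
class ToolCall:
    name: str
    params: dict
    approved: bool = False      # explicit human or policy approval

@dataclass
class AgentScope:
    allowed_tools: set = field(default_factory=set)

def validate_tool_call(call: ToolCall, scope: AgentScope) -> tuple[bool, str]:
    """Run before the tool executes; returns (allowed, reason)."""
    if call.name not in scope.allowed_tools:
        return False, "tool not in authorization scope"
    if call.name in DESTRUCTIVE and not call.approved:
        return False, "destructive operation requires approval"
    if call.name == "refund":
        amount = call.params.get("amount")
        is_number = isinstance(amount, (int, float)) and not isinstance(amount, bool)
        if not is_number or not 0 < amount <= 500:
            return False, "amount must be a number in (0, 500]"
    return True, "ok"
```

Checking that the call fits the agent's current goal is harder to express as a rule and is left out of this sketch.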

Layer 3: Reasoning Guardrails — Catch Drift Mid-Thought

These ensure the agent stays on track. Does the agent’s plan make sense? Is it stuck in a loop? By checking the “Chain of Thought,” you can intervene before an agent spends two minutes performing incorrect tasks.

In multi-step agentic workflows, the agent can reason itself into a corner — hallucinating intermediate steps, pursuing a goal that has drifted from the original intent, or constructing a chain-of-thought that is internally consistent but factually wrong. Reasoning guardrails sample intermediate steps and verify logical consistency. They add meaningful latency but prevent costly end-to-end failures in long agentic chains.
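The cheapest reasoning check is loop detection. The sketch below covers only that case, flagging an agent that repeats the same step too often; verifying logical consistency would need a separate, more expensive check. The step representation and the `max_repeats` default are assumptions.

```python
from collections import Counter

def detect_loop(steps: list[tuple[str, str]], max_repeats: int = 2) -> bool:
    """steps is a list of (tool_name, serialized_args) the agent has taken.

    Flags the run if any identical step occurs more than max_repeats times.
    """
    counts = Counter(steps)
    return any(n > max_repeats for n in counts.values())

history = [("search", "q=refund policy")] * 3
if detect_loop(history):
    print("Agent appears stuck; interrupting before it spends more tokens")
```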

Layer 4: Output Guardrails — Final Quality Gate Before Delivery

Check final outputs for factual grounding against retrieved context, ensure no PII slipped through in generated text, verify tone and compliance with brand guidelines, and catch hallucinations using a secondary verification model. This layer is the most expensive per request — run it selectively based on response type and risk level.

The Guardrail Trade-off Formula: Run all four layers on high-stakes outputs (financial advice, medical information, legal content). For low-stakes outputs (FAQs, simple lookups), Layer 1 and Layer 2 are usually sufficient. Tiering your guardrail activation by output risk level can reduce average latency overhead by 40–60% without sacrificing quality where it matters.
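Risk-tiered activation can be sketched as a lookup plus a short-circuiting runner. The topic labels and the check functions are placeholders; the point is only that low-risk requests skip the expensive layers and that a failing layer stops the chain.

```python
from typing import Callable, Optional

LAYERS_BY_RISK = {
    "low": ["input", "tool"],
    "high": ["input", "tool", "reasoning", "output"],
}
HIGH_RISK_TOPICS = {"financial_advice", "medical", "legal"}   # illustrative labels

def layers_for(topic: str) -> list[str]:
    risk = "high" if topic in HIGH_RISK_TOPICS else "low"
    return LAYERS_BY_RISK[risk]

def run_layers(layers: list[str], checks: dict[str, Callable[[str], bool]],
               payload: str) -> tuple[bool, Optional[str]]:
    """Run layers in order and stop at the first one that fails."""
    for name in layers:
        if not checks[name](payload):
            return False, name
    return True, None

# Placeholder checks; real ones would be classifiers or verification models.
checks = {
    "input": lambda p: "ignore previous" not in p.lower(),
    "tool": lambda p: True,
    "reasoning": lambda p: True,
    "output": lambda p: len(p) < 1000,
}
```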

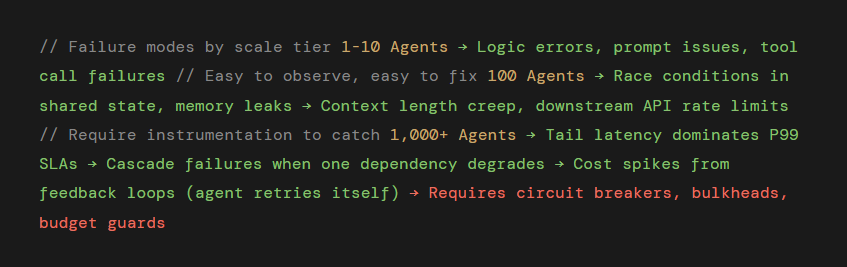

Section 5 — Scaling Agentic Systems: Performance and Reliability from One Agent to Thousands

Scaling from one agent to thousands introduces a unique set of challenges, particularly regarding memory and model selection. A single agent working well in testing is a data point. Ten agents working well is a coincidence. A thousand agents working reliably in production is an engineering discipline. Scaling agentic systems introduces failure modes that simply do not exist at small scale.

The Memory Problem

Here is a scenario that plays out constantly in early production deployments. You build a customer service agent. It handles 50-message conversations flawlessly in testing. You deploy it. Real users have 200-message conversations. The agent starts timing out because the full conversation history no longer fits in the context window. You paid for every token in that conversation and got nothing in return.

The fix is a proper memory architecture. Conversations should not live entirely in the context window. Implement working memory that holds only what is immediately relevant, episodic memory that summarizes and archives older turns, semantic memory that holds domain knowledge for retrieval on demand, and persistent memory stored in an external database for user-specific facts that should survive across sessions.

Working Memory: The active context window. Keep this lean and focused. Contains the current task, immediate conversation turns and retrieved context. Budget: 20–30% of max context.

Episodic Memory: Summarized conversation history. When the active context fills up, compress older turns into concise summaries and archive them. Retrieve on demand when the agent needs to reference earlier conversation.

Semantic Memory: Domain knowledge and facts stored in a vector database. Retrieved via similarity search when relevant. This scales independently of conversation length.

Persistent Memory: User preferences, history, and profile data stored in a traditional database. Inject selectively into context only when relevant to the current query.
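The interaction between working and episodic memory can be sketched as follows. The class name, the turn-count limit, and the stub summarizer are assumptions; a real system would budget by tokens and call a cheap model to summarize.

```python
class ConversationMemory:
    def __init__(self, working_limit: int = 4):
        self.working: list[str] = []     # recent turns kept in the context window
        self.episodic: list[str] = []    # summaries of archived turns
        self.working_limit = working_limit

    def add_turn(self, turn: str) -> None:
        self.working.append(turn)
        if len(self.working) > self.working_limit:
            # Archive the oldest half of working memory as one summary.
            half = max(1, self.working_limit // 2)
            old, self.working = self.working[:half], self.working[half:]
            self.episodic.append(self._summarize(old))

    def _summarize(self, turns: list[str]) -> str:
        # Placeholder: a real system would call a cheap summarization model.
        return f"[summary of {len(turns)} turns starting with: {turns[0][:30]}]"

    def build_context(self) -> list[str]:
        """Summaries first, then the verbatim recent turns."""
        return self.episodic + self.working

memory = ConversationMemory(working_limit=4)
for i in range(5):
    memory.add_turn(f"turn {i}")
```

Semantic and persistent memory sit outside this class: they would be queried per request and injected into the context only when relevant.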

Parallelism vs. Sequential Execution

Sequential agent pipelines are the latency killer nobody talks about enough. If your agent calls five tools one after another, you accumulate five round-trip latencies. Map your tool dependencies. Any tool calls that do not depend on each other’s output can run in parallel. In most real agentic workflows, 30–50% of tool calls can be parallelized, often cutting end-to-end latency in half.
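In Python, independent tool calls can be issued concurrently with `asyncio.gather`. The tools below are stubs that sleep to simulate network latency, and their names are hypothetical.

```python
import asyncio
import time

async def call_tool(name: str, delay: float) -> str:
    """Stub tool: sleeps to simulate a network round trip."""
    await asyncio.sleep(delay)
    return f"{name}:done"

async def run_parallel() -> list[str]:
    # These three calls do not depend on each other's output,
    # so total latency is close to the slowest call, not the sum.
    return await asyncio.gather(
        call_tool("crm_lookup", 0.1),
        call_tool("order_history", 0.1),
        call_tool("inventory", 0.1),
    )

start = time.perf_counter()
results = asyncio.run(run_parallel())
elapsed = time.perf_counter() - start
print(results, f"{elapsed:.2f}s")
```

Calls that consume another call's output still have to run in sequence, which is why mapping the dependency graph comes first.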

The Multi-Model Tiering Strategy

One of the most effective ways to optimize scale is to stop using the most expensive model for everything.

- The Small Model First: Route simple queries or initial classifications to a fine-tuned 7B parameter model. These are incredibly cheap and fast.

- Escalation: If the small model reports low confidence or the task is flagged as complex, escalate the request to a frontier model like GPT-4o.

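By handling 80 percent of queries with a “small” model and only 20 percent with the “expensive” model, you can cut inference costs severalfold while maintaining high quality for difficult tasks. The saving tops out at about 5x, because the 20 percent of traffic that still reaches the frontier model sets a floor on the bill.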
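A quick back-of-the-envelope check of the 80/20 split is worth doing, because the traffic that still reaches the frontier model limits how much tiering can save. The prices below are illustrative cost units, not real vendor pricing, and the sketch ignores the small-model cost of queries that end up escalated.

```python
def blended_cost(frac_small: float, price_small: float, price_frontier: float) -> float:
    """Average cost per query when frac_small of traffic stays on the small model."""
    return frac_small * price_small + (1 - frac_small) * price_frontier

# Assume the small model is 100x cheaper per query than the frontier model.
frontier_only = blended_cost(0.0, 0.01, 1.00)   # everything on the frontier model
tiered = blended_cost(0.8, 0.01, 1.00)          # 80/20 split
savings_factor = frontier_only / tiered

print(f"tiered cost per query: {tiered:.3f}, savings: {savings_factor:.1f}x")
```

Even with a small model that is 100 times cheaper, the blended cost falls by a little under 5x. Pushing the saving further means raising the share of traffic the small model can handle.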

The Failure Modes Change

At single-agent scale, failures are obvious. At thousand-agent scale, they are statistical. New failure patterns emerge at high concurrency, and one of the most expensive is an agent stuck in a looping failure state.

Budget guards are underused in agentic systems. A budget guard is a hard limit on tokens, tool calls, or dollars per task per agent instance. When an agent hits the limit, it fails gracefully rather than running until it either succeeds expensively or hits a timeout catastrophically. Set budget guards in every production agentic system. Without them, a single misbehaving agent in a looping failure state can consume hundreds of dollars before anyone notices.
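A budget guard can be as simple as a counter that raises when a limit is crossed. The class names, the limits, and the 500-token step cost below are illustrative assumptions.

```python
class BudgetExceeded(Exception):
    pass

class BudgetGuard:
    def __init__(self, max_tokens: int, max_tool_calls: int):
        self.max_tokens = max_tokens
        self.max_tool_calls = max_tool_calls
        self.tokens = 0
        self.tool_calls = 0

    def charge(self, tokens: int = 0, tool_calls: int = 0) -> None:
        """Record usage and raise as soon as either limit is exceeded."""
        self.tokens += tokens
        self.tool_calls += tool_calls
        if self.tokens > self.max_tokens or self.tool_calls > self.max_tool_calls:
            raise BudgetExceeded(f"used {self.tokens} tokens, {self.tool_calls} tool calls")

def run_agent(guard: BudgetGuard, steps: int) -> str:
    try:
        for _ in range(steps):
            guard.charge(tokens=500, tool_calls=1)   # stand-in for one agent step
        return "completed"
    except BudgetExceeded:
        # Fail gracefully: return a fallback instead of looping until timeout.
        return "stopped: budget exhausted, handing off"
```

Create one guard per task per agent instance, so that a single runaway task cannot consume the budget of the others.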

Multi-Level Caching for Reliability

Beyond simple prompt-response caching, consider a deeper strategy:

- Exact Match Cache: For identical prompts.

- Tool Result Cache: If an agent needs to know the weather in New York five times in one hour, do not call the API five times. Cache the tool output.

- KV Caching: Reuse the model’s attention key/value state for a shared prompt prefix, such as a long system prompt, to reduce the time spent re-processing the same prefix on every request.
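The tool result cache is the easiest of these levels to build yourself. Here is a minimal sketch with a time-to-live; `get_weather` is a stub standing in for a real API call, and the one-hour TTL is an assumption that depends on how quickly the underlying data goes stale.

```python
import time

class ToolResultCache:
    def __init__(self, ttl_seconds: float):
        self.ttl = ttl_seconds
        self._store: dict = {}   # (tool name, args) -> (timestamp, result)

    def get_or_call(self, tool, *args):
        key = (tool.__name__, args)
        now = time.monotonic()
        hit = self._store.get(key)
        if hit is not None and now - hit[0] < self.ttl:
            return hit[1]                     # fresh cached result
        result = tool(*args)                  # miss or expired: call the tool
        self._store[key] = (now, result)
        return result

calls = {"n": 0}

def get_weather(city: str) -> str:
    """Stub for a real weather API; counts how often it is invoked."""
    calls["n"] += 1
    return f"Sunny in {city}"

tool_cache = ToolResultCache(ttl_seconds=3600)
for _ in range(5):
    forecast = tool_cache.get_or_call(get_weather, "New York")
print(forecast, "| API calls made:", calls["n"])
```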

Section 6 — The Practical Optimization Playbook

The following sequence represents the order of operations for optimizing a GenAI system that is already in production. Not every system needs every step, but the sequence matters. Optimize in this order.

The Monitoring Non-Negotiables: None of these optimizations work without telemetry. Instrument: cost per query by model tier, cache hit rate by cache level, guardrail trigger rate by layer, p50 / p95 / p99 latency, wasted compute percentage, and agent budget utilization. If you are not measuring it, you cannot optimize it and you definitely cannot explain an unexpected cost spike to your finance team at 9 AM on a Monday.
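Two of these metrics can be computed with the standard library alone, as in the sketch below. The function names are illustrative; in production these numbers would normally come from your metrics backend rather than from in-process lists.

```python
import statistics

def latency_percentiles(latencies_ms: list[float]) -> dict[str, float]:
    """Return p50, p95, and p99 from raw latency samples (needs at least two)."""
    # quantiles(n=100) returns 99 cut points; index 49 is p50, 94 is p95, 98 is p99.
    q = statistics.quantiles(latencies_ms, n=100, method="inclusive")
    return {"p50": q[49], "p95": q[94], "p99": q[98]}

def cache_hit_rate(hits: int, misses: int) -> float:
    total = hits + misses
    return hits / total if total else 0.0

samples = [float(ms) for ms in range(1, 102)]   # synthetic latencies: 1..101 ms
print(latency_percentiles(samples))
print(f"cache hit rate: {cache_hit_rate(30, 70):.0%}")
```

Tail percentiles matter more than the average here, since a slow guardrail or an escalated request shows up in p95 and p99 long before it moves the mean.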

Section 7 — Closing: The Trade-off Is Not a Bug

The iron triangle of cost, quality, and speed in GenAI is not a problem that will be engineered away. It is a fundamental characteristic of the technology. The teams building production AI systems that scale reliably and economically are not the ones who found a way around the triangle. They are the ones who learned to make deliberate, informed choices about where on the triangle to sit — and built the infrastructure to move that position dynamically based on the nature of each individual request.

Cheap for the simple. Expensive for the complex. Fast everywhere the context allows. Safe where the stakes demand it. That is not a compromise. That is an architecture.

Practical Optimization Checklist

If you are building GenAI or agentic AI in production, ask:

- Are we sending unnecessary tokens?

- Are we caching aggressively enough?

- Do we really need the largest model for every query?

- Are guardrails placed before expensive inference?

- Do we summarize conversations instead of replaying everything?

- Are tool calls bounded and validated?

- Are we measuring cost per business outcome, not just per request?

Final Thought

The future of GenAI is not about building bigger agents. It is about building disciplined systems.

The winning teams will not be those who use the most powerful model. They will be those who:

- Route intelligently

- Cache aggressively

- Guard early

- Constrain context

- Measure everything

Cost, quality, and latency are not enemies. They are design constraints.

If you treat them as first-class engineering parameters from day one, your GenAI system will scale. If you ignore them, scale will expose every hidden weakness.

That is the real difference between a demo and a durable AI product.

Conclusion

Optimizing GenAI is no longer a luxury; it is a requirement for production. The “perfect” system is one that uses the smallest possible model for the task, implements rigorous guardrails without suffocating the user experience, and employs smart caching to avoid redundant work. By focusing on these architectural layers, you can build agentic systems that are not just intelligent, but also sustainable and fast.

#AgenixAI #AjayVermaBlog #GenerativeAI #AIEngineering #AIOperations #LLMOptimization #AICostOptimization #AIScalability #AIArchitecture #ProductionAI #MLOps #RAG #SemanticCaching #AIInfrastructure #ResponsibleAI #EnterpriseAI

If you like this article and want to show some love:

- Visit my blogs

- Follow me on Medium and subscribe for free to catch my latest posts.

- Let’s connect on LinkedIn / Ajay Verma
