From Static Rules to Learning Agents: How Reinforcement Learning is Rewiring AI Architecture

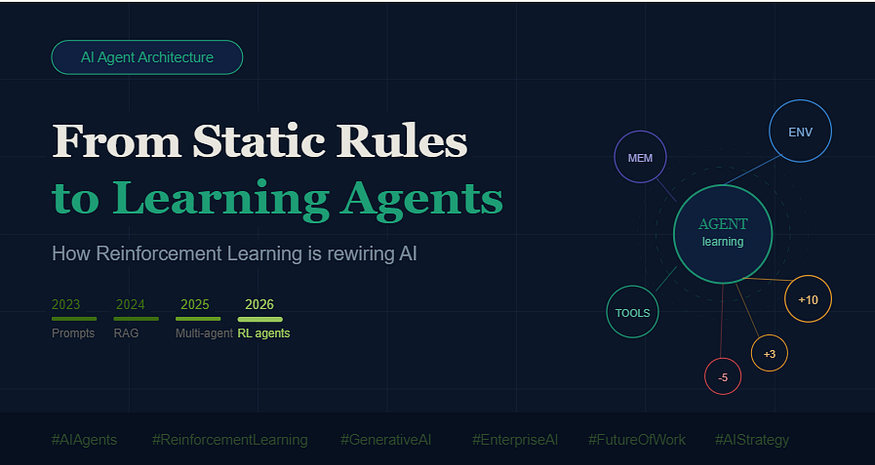

The way we build AI systems has followed a fairly predictable arc over the last three years. In 2023, the conversation was dominated by prompt engineering. In 2024, everyone was building RAG pipelines. By 2025, multi-agent systems had become the architecture of choice for serious enterprise teams. In 2026, the paradigm is shifting again, and this one is different in a more fundamental way.

We are moving from agents that follow instructions to agents that learn from outcomes. That is not an incremental improvement. It is a different approach to intelligence itself.

The Problem With Every AI Architecture Before This One

Every one of those earlier architectures shared a common limitation: someone, somewhere, had to tell the system how to behave. A prompt engineer scripted the reasoning. A RAG pipeline developer curated the retrieval logic. Even multi-agent orchestration required humans to design the workflow, define the handoffs, and specify what success looked like.

These systems are sophisticated. They are also fundamentally static. The logic is baked in at build time. When the environment changes, which it always does, the system cannot adapt on its own. It does exactly what it was told to do, and nothing more.

This is a real constraint for organizations trying to deploy AI in dynamic, high-variability environments. Customer interactions do not follow scripts. Business processes shift. Data changes shape. A system that cannot update its own behavior is a system that needs constant human maintenance.

What Reinforcement Learning Actually Does

Reinforcement learning introduces a completely different paradigm. Instead of telling the agent how to behave, the system learns what works through direct experience.

The mechanism is straightforward in principle, even if complex in implementation. The agent takes an action. The environment responds with feedback: a reward if the action moved toward a goal, a penalty if it did not. Over many iterations, the agent develops a policy, a learned mapping from situations to the actions most likely to pay off in them. It is not following a rule. It is following a pattern it discovered through accumulated experience.
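To make that loop concrete, here is a minimal Python sketch of its simplest form: an agent choosing between two actions, observing a reward, and updating its estimates with an epsilon-greedy rule. The action names, success rates, and simulated environment are hypothetical. Real agent policies are far richer, but the act, observe, update cycle is the same.

```python
import random

def learn_policy(reward_fn, actions, steps=2000, epsilon=0.1, seed=0):
    """Epsilon-greedy value learning: act, observe reward, update estimates."""
    rng = random.Random(seed)
    values = {a: 0.0 for a in actions}   # estimated average reward per action
    counts = {a: 0 for a in actions}     # how many times each action was taken
    for _ in range(steps):
        if rng.random() < epsilon:
            action = rng.choice(actions)                    # explore
        else:
            action = max(actions, key=lambda a: values[a])  # exploit current best
        reward = reward_fn(action, rng)                     # environment feedback
        counts[action] += 1
        # Incremental mean: nudge the estimate toward the observed reward.
        values[action] += (reward - values[action]) / counts[action]
    return values, counts

def simulated_env(action, rng):
    # Hypothetical environment: action_b reaches the goal more often than action_a.
    success_rate = {"action_a": 0.3, "action_b": 0.8}[action]
    return 1.0 if rng.random() < success_rate else 0.0

values, counts = learn_policy(simulated_env, ["action_a", "action_b"])
learned_choice = max(values, key=values.get)
print(learned_choice, counts)
```

Nothing in the agent's code says `action_b` is better. The preference comes entirely from the reward signal.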

In practice, this means an agent using RL might discover that a particular tool produces faster, more accurate results for certain query types. It might learn that a specific reasoning path yields better outcomes when handling ambiguous inputs. It might develop a preference for conservative actions in low-confidence scenarios. None of this was explicitly programmed. It emerged from the reward signal.
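The tool-selection case can be sketched as a contextual bandit: the agent keeps separate value estimates for each query type and balances trying tools against using the best one found so far. The tool names (`sql_lookup`, `web_search`), the query types, and the success rates below are hypothetical stand-ins for real production signals.

```python
import random
from collections import defaultdict

class ToolSelector:
    """Contextual bandit: separate epsilon-greedy value estimates per query type."""

    def __init__(self, tools, epsilon=0.1, seed=0):
        self.tools = list(tools)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.values = defaultdict(float)  # (query_type, tool) -> mean observed reward
        self.counts = defaultdict(int)    # (query_type, tool) -> number of trials

    def best(self, query_type):
        # Ties go to the first tool in the list.
        return max(self.tools, key=lambda t: self.values[(query_type, t)])

    def choose(self, query_type):
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.tools)  # explore
        return self.best(query_type)            # exploit

    def update(self, query_type, tool, reward):
        key = (query_type, tool)
        self.counts[key] += 1
        self.values[key] += (reward - self.values[key]) / self.counts[key]

# Hypothetical success rates the agent is never told; it only sees rewards.
SUCCESS = {
    ("structured", "sql_lookup"): 0.9, ("structured", "web_search"): 0.4,
    ("open_ended", "sql_lookup"): 0.2, ("open_ended", "web_search"): 0.7,
}

selector = ToolSelector(["sql_lookup", "web_search"])
env_rng = random.Random(1)
for _ in range(4000):
    query_type = env_rng.choice(["structured", "open_ended"])
    tool = selector.choose(query_type)
    reward = 1.0 if env_rng.random() < SUCCESS[(query_type, tool)] else 0.0
    selector.update(query_type, tool, reward)

print(selector.best("structured"), selector.best("open_ended"))
```

In a real system the reward would likely also fold in latency or cost, so "faster" and "more accurate" both shape the learned preference.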

For enterprise AI teams, the implications are significant. An RL-equipped agent does not just execute a process. It optimizes one.

The Architecture That Makes It Real

The most capable AI agents emerging now are not single-model systems. They are stacks. A useful mental model for where reinforcement learning fits looks like this: an LLM reasoning layer handles language understanding and generation. A tool ecosystem gives the agent access to external systems, APIs, and data sources. A memory layer maintains context across sessions and tasks. And a reinforcement learning policy sits across all of it, adjusting how the agent uses each of those components based on what has worked before.

The RL policy is the adaptive layer. It does not replace the LLM or the tools. It governs how they are used. Think of it as the agent developing judgment over time, rather than executing judgment that was pre-specified at design time.
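A deliberately tiny sketch of that layering, assuming stubbed components: `reason` stands in for the LLM, `tools` for the tool ecosystem, `memory` for the memory layer, and `scores` for the RL policy's learned state. All names are hypothetical, and the policy is greedy for brevity; a production policy would also need an exploration strategy.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

@dataclass
class Agent:
    reason: Callable[[str], str]              # stand-in for the LLM reasoning layer
    tools: Dict[str, Callable[[str], str]]    # tool ecosystem: name -> callable
    memory: List[Tuple[str, str, float]] = field(default_factory=list)  # (task, tool, reward)
    scores: Dict[str, float] = field(default_factory=dict)  # RL policy state: tool -> value
    lr: float = 0.2                           # how fast the policy moves toward new rewards

    def act(self, task: str) -> Tuple[str, str]:
        # Policy layer: greedy choice over learned values (untried tools count as 0).
        tool_name = max(self.tools, key=lambda name: self.scores.get(name, 0.0))
        plan = self.reason(task)                       # reasoning layer interprets the task
        return tool_name, self.tools[tool_name](plan)  # tool layer executes

    def learn(self, task: str, tool_name: str, reward: float) -> None:
        self.memory.append((task, tool_name, reward))  # memory layer records the outcome
        old = self.scores.get(tool_name, 0.0)
        self.scores[tool_name] = old + self.lr * (reward - old)  # moving-average update

agent = Agent(
    reason=lambda task: task.strip().lower(),  # hypothetical LLM stub
    tools={"search": lambda q: f"search:{q}", "calculator": lambda q: f"calc:{q}"},
)
for _ in range(3):
    tool, result = agent.act("What is 6 * 7?")
    reward = 1.0 if tool == "calculator" else -1.0  # hypothetical outcome signal
    agent.learn("What is 6 * 7?", tool, reward)

print(agent.act("What is 6 * 7?")[0])  # -> calculator
```

The LLM and tools are unchanged throughout; only the policy's scores move, which is the sense in which the RL layer governs how the other components are used.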

This is also why the shift to learning agents is harder to replicate than previous architectural shifts. RAG pipelines are relatively commoditized now. A well-configured multi-agent system takes engineering, but the patterns are established. A well-trained RL policy requires sustained feedback loops, careful reward design, and a production environment rich enough to generate meaningful signal. That is a higher bar, and a more defensible one.

Why Reward Design Is the New Prompt Engineering

If prompt engineering was the skill that defined 2023, reward design may be the defining skill of the next two years. How you structure the reward signal determines what the agent optimizes for, and the gap between what you measure and what you actually care about is where most RL systems go wrong.

Reward the agent for completing tasks quickly, and it will find shortcuts that sacrifice accuracy. Reward it purely for correctness, and it may over-invest in verification steps that add latency. The most robust reward structures encode several objectives at once (task completion, efficiency, accuracy, and appropriate escalation to human oversight) and balance them carefully.
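The trade-off can be shown with two hypothetical reward functions scoring the same two outcomes: a fast shortcut that gets the answer wrong, and a slower, correct path. The `Outcome` fields and every weight below are illustrative assumptions, not recommended values.

```python
from dataclasses import dataclass

@dataclass
class Outcome:
    completed: bool
    correct: bool
    latency_s: float
    escalated: bool
    needed_escalation: bool

def speed_only_reward(o: Outcome) -> float:
    # Single objective: finish fast. Correctness is invisible to the agent.
    return (1.0 if o.completed else 0.0) - 0.1 * o.latency_s

def balanced_reward(o: Outcome, w_complete=1.0, w_correct=2.0,
                    w_latency=0.1, w_escalation=1.0) -> float:
    r = w_complete * (1.0 if o.completed else 0.0)
    r += w_correct * (1.0 if o.correct else -1.0)  # wrong answers cost more than slow ones
    r -= w_latency * o.latency_s                   # efficiency still matters, but less
    # Reward escalating exactly when it was needed; penalize misses and needless handoffs.
    r += w_escalation * (1.0 if o.escalated == o.needed_escalation else -1.0)
    return r

shortcut = Outcome(completed=True, correct=False, latency_s=1.0,
                   escalated=False, needed_escalation=False)
careful = Outcome(completed=True, correct=True, latency_s=4.0,
                  escalated=False, needed_escalation=False)

print(speed_only_reward(shortcut) > speed_only_reward(careful))  # True: the shortcut wins
print(balanced_reward(careful) > balanced_reward(shortcut))      # True: the careful path wins
```

Under the speed-only reward, an agent that learns to maximize its score is learning to take the shortcut. The weights are where the strategy conversation in the next paragraph actually lands.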

This is not a technical problem alone. It is a strategy problem. Organizations need to be precise about what they want their AI agents to optimize for, at the task level and at the business level. A misaligned reward structure compounds over time as the agent gets better at chasing the wrong goal.

What This Means for Enterprise AI Strategy

The shift toward learning agents does not make earlier AI architectures obsolete. RAG will remain the right tool for knowledge retrieval. Multi-agent orchestration will remain the right architecture for complex, decomposable workflows. What RL adds is adaptability at the policy layer.

The organizations best positioned to benefit from this shift are those that have already built robust feedback loops into their AI systems. If you have been measuring AI agent performance consistently, logging decisions and outcomes, and maintaining clean interaction data, you have the raw material for RL training. If you have not, the gap is not insurmountable, but it is real.
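What "logging decisions and outcomes" might look like in its simplest form: one JSON line per decision, with the reward filled in once the outcome is known, so the log can later be turned into (state, action, reward) training tuples. The record schema and file name here are hypothetical.

```python
import json
import os
import tempfile
import time
from dataclasses import asdict, dataclass, field
from typing import Optional

@dataclass
class DecisionRecord:
    episode_id: str
    state: dict                      # what the agent observed (e.g. query type)
    action: str                      # what it chose (tool, strategy, escalation)
    reward: Optional[float] = None   # filled in once the outcome is known
    timestamp: float = field(default_factory=time.time)

def append_record(path: str, record: DecisionRecord) -> None:
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(record)) + "\n")  # one JSON object per line (JSONL)

def load_training_tuples(path: str):
    """Return (state, action, reward) tuples, skipping records with no outcome yet."""
    tuples = []
    with open(path, encoding="utf-8") as f:
        for line in f:
            rec = json.loads(line)
            if rec["reward"] is not None:
                tuples.append((rec["state"], rec["action"], rec["reward"]))
    return tuples

path = os.path.join(tempfile.mkdtemp(), "decisions.jsonl")
append_record(path, DecisionRecord("ep-1", {"query_type": "billing"}, "sql_lookup", reward=1.0))
append_record(path, DecisionRecord("ep-2", {"query_type": "refund"}, "escalate"))  # pending
training = load_training_tuples(path)
print(training)
```

Keeping pending records rather than dropping them matters: the outcome often arrives later, and it can be joined back by `episode_id`.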

AI implementations that were oriented around metrics and outcomes from the start will translate more naturally into RL-ready architectures than systems built purely around throughput.

The Competitive Reality

Autonomous, self-improving AI systems were a research concept three years ago. They are an engineering reality today. The organizations that understand this shift earliest, and invest in the infrastructure to support it, will have agents that improve continuously in production rather than degrading or plateauing.

The question for leadership teams is not whether reinforcement learning matters. The question is whether your current AI investment strategy is building toward this, or building away from it. Systems designed purely around static workflows will require significant rearchitecting as RL-powered competitors pull ahead.

AI strategy alignment has always required thinking multiple architectural generations ahead. The teams doing that work now will not be surprised by what learning agents make possible. Everyone else will spend the next two years catching up.

The next generation of AI systems will not just generate answers. They will learn how to get better answers. That is a fundamentally different kind of AI, and it is already being built.

What Reinforcement Learning Brings to the Table

Traditional AI systems are largely static. Even when powered by large language models, they follow patterns learned during training and respond based on prompts.

Reinforcement Learning introduces a feedback loop.

An agent interacts with an environment, takes actions, and receives signals in the form of rewards or penalties. These signals guide future decisions.

For example:

- A correct response can be rewarded

- Efficient use of tools can earn additional reward

- Incorrect or irrelevant outputs can be penalized

Over time, the agent learns to choose actions that maximize cumulative, long-term reward rather than the immediate payoff of any single step.
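"Long-term reward" usually means the discounted return, G = r0 + gamma * r1 + gamma^2 * r2 + ..., where the discount factor gamma (between 0 and 1) sets how much future outcomes count. The sketch below computes it for two hypothetical trajectories; the reward values and gamma = 0.9 are assumptions chosen for illustration.

```python
def discounted_return(rewards, gamma=0.9):
    """G = r0 + gamma*r1 + gamma^2*r2 + ..., accumulated from the last step backwards."""
    g = 0.0
    for r in reversed(rewards):
        g = r + gamma * g
    return g

# Two hypothetical trajectories for the same task.
quick_but_wrong = [1.0, 0.0, -5.0]   # fast reply now, complaint (penalty) later
slower_but_right = [0.0, 0.0, 3.0]   # extra verification now, resolved issue later

print(discounted_return(quick_but_wrong), discounted_return(slower_but_right))
```

The quick path wins on the first step but loses on the return (about -3.05 versus 2.43), which is exactly the gap between short-term and long-term optimization.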

This is not just optimization. It is behavioral learning.

From Instructions to Experience

The biggest shift is philosophical.

Earlier systems were designed around instructions. Developers defined workflows, rules, and logic. The AI followed them.

With Reinforcement Learning, the system starts discovering what works.

This leads to:

- Better tool usage without explicit programming

- Improved reasoning sequences based on past success

- Adaptive decision-making that evolves over time

Instead of hardcoding intelligence, we allow intelligence to emerge.

Why This Matters for AI Agents

AI agents are not just models. They are systems that:

- Plan tasks

- Use tools

- Maintain memory

- Interact with dynamic environments

In such systems, static logic quickly becomes a limitation.

Consider a customer support agent. A rule-based system may follow predefined scripts. A learning agent, however, can:

- Identify which responses resolve issues faster

- Learn when to escalate or automate

- Optimize interaction flow based on outcomes

This creates systems that improve with usage, similar to how humans learn from experience.
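For the support scenario, optimizing interaction flow is a multi-step problem, which tabular Q-learning handles in its simplest form. The ticket states, actions, and reward values below are hypothetical. They encode the assumptions that a clarifying question costs a little, answering an ambiguous ticket blindly usually fails, and escalation resolves the issue at a higher cost.

```python
import random

# Hypothetical ticket flow: (state, action) -> (next_state, or None if the ticket closes; reward)
TRANSITIONS = {
    ("new", "answer"): (None, -1.0),          # answering an ambiguous ticket directly fails
    ("new", "ask"): ("clarified", -0.1),      # one extra turn costs a little
    ("new", "escalate"): (None, 0.3),         # resolved, but uses expensive human time
    ("clarified", "answer"): (None, 1.0),
    ("clarified", "ask"): ("clarified", -0.1),
    ("clarified", "escalate"): (None, 0.3),
}
ACTIONS = ["answer", "ask", "escalate"]

def q_learning(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = {key: 0.0 for key in TRANSITIONS}
    for _ in range(episodes):
        state = "new"
        for _ in range(10):  # cap episode length
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)                         # explore
            else:
                action = max(ACTIONS, key=lambda a: q[(state, a)])   # exploit
            next_state, reward = TRANSITIONS[(state, action)]
            # Target: immediate reward plus the discounted best value of the next state.
            future = 0.0 if next_state is None else max(q[(next_state, a)] for a in ACTIONS)
            q[(state, action)] += alpha * (reward + gamma * future - q[(state, action)])
            if next_state is None:
                break
            state = next_state
    return q

q = q_learning()
policy = {s: max(ACTIONS, key=lambda a: q[(s, a)]) for s in ("new", "clarified")}
print(policy)
```

Under these assumed rewards the agent learns to ask first and then answer, and it was never told that escalation is the worse default; it inferred that from outcomes.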

The New AI Architecture

Future AI agents will not rely on a single component. Instead, they will be built as layered systems:

- A reasoning layer powered by large language models

- A tool ecosystem that connects APIs, databases, and applications

- Memory systems that retain past interactions and context

- A reinforcement learning layer that continuously refines decisions

The reinforcement learning layer acts as the decision optimizer. It connects outcomes with actions and drives continuous improvement.

Challenges to Consider

While the promise is strong, implementing reinforcement learning in real-world AI systems is not trivial.

Some key challenges include:

- Designing meaningful reward functions

- Avoiding unintended behaviors due to poorly defined incentives

- Balancing exploration and exploitation

- Managing cost and latency in learning loops

If rewards are not aligned with business goals, agents may optimize the wrong behavior. This is a critical area where domain expertise becomes essential.
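Exploration versus exploitation is the most mechanical of these challenges. One well-known option among several is UCB1, which adds an optimism bonus to actions that have been tried less often, so they are revisited until the evidence against them is strong. The three "strategies" and their success rates below are hypothetical.

```python
import math
import random

def ucb1(pull, n_arms, steps=2000):
    """UCB1: pick the arm with the best optimistic estimate, mean + sqrt(2 ln t / n)."""
    counts = [0] * n_arms
    means = [0.0] * n_arms
    for t in range(1, steps + 1):
        if t <= n_arms:
            arm = t - 1  # try every arm once before using the bonus
        else:
            # Rarely tried arms get a larger bonus (exploration); as evidence
            # accumulates, the bonus shrinks and the best mean wins (exploitation).
            arm = max(range(n_arms),
                      key=lambda i: means[i] + math.sqrt(2 * math.log(t) / counts[i]))
        reward = pull(arm)  # expected to lie in [0, 1]
        counts[arm] += 1
        means[arm] += (reward - means[arm]) / counts[arm]
    return counts, means

rng = random.Random(42)
success = [0.2, 0.5, 0.8]  # hypothetical success rates of three response strategies
counts, means = ucb1(lambda arm: 1.0 if rng.random() < success[arm] else 0.0, n_arms=3)
print(counts)
```

Every pull of a weaker strategy is a real interaction in production, which is why exploration ties directly to the cost and latency concern in the list above.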

The Road Ahead

We are moving from systems that respond to systems that learn.

The next generation of AI will not be defined by how well it answers a question, but by how effectively it improves over time.

This opens up possibilities such as:

- Self-optimizing enterprise workflows

- Autonomous research agents

- Adaptive decision systems in healthcare, finance, and operations

Reinforcement Learning is unlikely to replace existing approaches like prompting or retrieval. Instead, it is more likely to sit on top of them, turning static intelligence into dynamic capability.

Final Thought

AI is no longer just about generating outputs. It is about making decisions, learning from outcomes, and continuously improving.

Reinforcement Learning is the layer that brings this transformation to life.

The real question is no longer how to build smarter models.

It is how to build systems that can learn to become smarter on their own.


If you like this article and want to show some love:

- Visit my blogs

- Follow me on Medium and subscribe for free to catch my latest posts.

- Let’s connect on LinkedIn / Ajay Verma
