Understanding and Handling Errors in LLM/GenAI Applications: A Comprehensive Guide

Building applications with Large Language Models (LLMs) and Generative AI brings incredible opportunities, but it also introduces unique challenges in error handling. As these systems become increasingly integrated into production environments, understanding and gracefully managing errors is crucial for building robust, reliable applications.

In this comprehensive guide, we’ll explore the various types of errors you might encounter when working with LLM/GenAI applications, what they mean, and most importantly, how to handle them effectively.

Why Error Handling Matters in GenAI Applications

Unlike traditional software where errors are often deterministic, LLM-based applications introduce additional layers of complexity:

- External API dependencies with their own failure modes

- Resource constraints and quota limitations

- Non-deterministic outputs that can occasionally fail validation

- Network and infrastructure challenges at scale

- Model-specific limitations and edge cases

Proper error handling ensures your application remains resilient, provides good user experience, and maintains operational efficiency even when things go wrong.

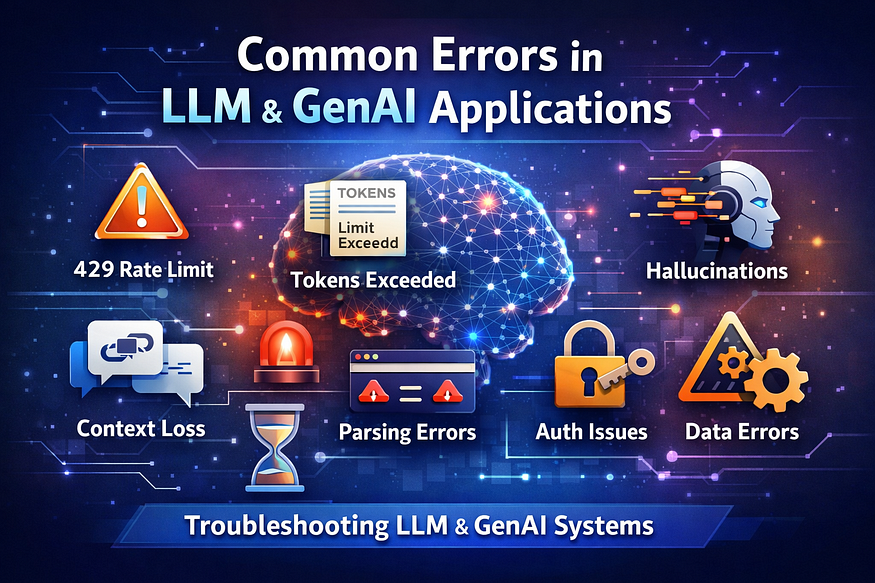

Common Error Types in LLM/GenAI Applications

Output Errors: Hallucinations occur when models generate plausible but factually incorrect information, often due to gaps in training data or overgeneralization. Factual errors, like mixing up historical dates, and faithfulness issues — where the model ignores provided context in RAG pipelines — fall here too. Handle them with clear prompts, retrieval-augmented generation (RAG) for grounding, output validation against trusted sources, and fine-tuning on domain-specific data.

Retrieval Errors: In RAG setups, retrieval failures happen when irrelevant or incomplete documents are fetched, leading to poor responses. This stems from weak embeddings, poor chunking, or index mismatches. Mitigate by optimizing vector databases with hybrid search (semantic + keyword), reranking results, and evaluating retrieval metrics like recall@K.
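Recall@K is the share of a query’s relevant documents that appear in the top K retrieved results. Below is a minimal sketch. It assumes you have a small hand-labeled evaluation set that maps each query to the IDs of its relevant documents, and the function names are illustrative:

```python
def recall_at_k(retrieved_ids: list[str], relevant_ids: set[str], k: int) -> float:
    """Fraction of the relevant documents that appear in the top-k retrieved results."""
    if not relevant_ids:
        raise ValueError("recall@K is undefined when a query has no relevant documents")
    top_k = set(retrieved_ids[:k])
    return len(top_k & relevant_ids) / len(relevant_ids)


def mean_recall_at_k(runs: list[tuple[list[str], set[str]]], k: int) -> float:
    """Average recall@K over (retrieved, relevant) pairs from a labeled evaluation set."""
    return sum(recall_at_k(retrieved, relevant, k) for retrieved, relevant in runs) / len(runs)
```

Track mean recall@K across chunking or embedding changes. This shows whether a retrieval tweak actually helped before it reaches users.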

Rate Limiting Errors: A 429 ResourceExhausted (or RateLimitError) means you hit a limit, such as requests per minute or a free-tier quota on APIs like Gemini. It can happen on paid plans too, often with messages like “check your plan and billing”. To handle it, implement exponential backoff retries (e.g., wait 1s, then 2s, then 4s), queue requests, upgrade quotas, or distribute load across regions/models.

Context Errors: Scope confusion occurs when responses are too broad or too narrow for the query. Temporal logic errors confuse timelines or cause and effect. Fixed context windows (e.g., 128K tokens) force truncation, so early details are lost. Use dynamic prompting for scope, chain-of-thought for logic, and summarization or sliding windows for long contexts.

Infrastructure Errors: Large inputs in streaming multimodal requests (e.g., videos) can overload servers. The result is timeouts, parsing failures, or even 429 responses. Client-side issues include malformed API calls. Add request batching, async processing, error logging (e.g., Sentry), and monitoring tools like LangSmith for traces.

1. Rate Limiting & Quota Errors (HTTP 429)

What it means: Rate limiting errors occur when your application exceeds the allowed number of API requests within a specific time window. This is one of the most common errors developers encounter.

- Throughput Limits: You have exceeded your allowed Requests Per Minute (RPM) or Tokens Per Minute (TPM).

- Financial Quotas: You have hit your hard billing cap, or your prepaid credits have run out (e.g., “You exceeded your current quota, please check your plan and billing details”).

Common manifestations:

- 429 Too Many Requests

- RateLimitError: You exceeded your current quota, please check your plan and billing details.

- Rate limit reached for requests

- Exceeding token usage limits

- 429 ResourceExhausted

- High concurrency in production systems

- Multiple agents or workflows hitting the API simultaneously

How to handle:

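```python
import random
import time

from openai import RateLimitError  # openai>=1.0 SDK; substitute your provider's 429 exception


def exponential_backoff_retry(func, max_retries=5):
    """Call func(), retrying on rate-limit errors with exponential backoff and jitter."""
    for attempt in range(max_retries):
        try:
            return func()
        except RateLimitError:
            # Note: a billing-quota 429 will keep failing; detect it and fail fast in production
            if attempt == max_retries - 1:
                raise  # out of retries: surface the original error
            # Exponential backoff with jitter: roughly 1s, 2s, 4s, 8s plus up to 1s of noise
            wait_time = (2 ** attempt) + random.uniform(0, 1)
            time.sleep(wait_time)
```

Best practices: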

- Implement Exponential Backoff with Jitter: Never fail immediately on a throughput 429. Retry with exponential backoff plus random jitter so that concurrent clients do not retry in lockstep (Python’s tenacity library is well suited to this). For example, wait 2 seconds, then 4, then 8, before finally failing.

- Queue and Throttle Requests: If you are processing batch jobs (like summarizing hundreds of documents), push tasks into a message queue (e.g., RabbitMQ, or a task queue such as Celery). Consume them at a rate slightly below your TPM/RPM limits.

- Billing Alerts: Code-level retries won’t help with hard quota limits. Set up automated billing alerts in your LLM provider’s dashboard so your DevOps team is notified before you run out of credits.

- Monitor your usage patterns

- Consider upgrading your plan if you consistently hit limits

- Cache responses when possible to reduce redundant calls
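The queue-and-throttle advice above can also be applied inside a single process. Below is a minimal sketch of a client-side sliding-window throttle. It assumes one process; the class name and parameters are hypothetical. With multiple workers you would need a shared store such as Redis to track the window.

```python
import threading
import time
from collections import deque


class RequestsPerMinuteLimiter:
    """Hypothetical client-side sliding-window throttle that keeps you under an RPM budget.

    Choose max_requests slightly below your provider's published RPM limit.
    """

    def __init__(self, max_requests: int, window_seconds: float = 60.0,
                 clock=time.monotonic, sleep=time.sleep):
        self.max_requests = max_requests
        self.window = window_seconds
        self.clock = clock          # injectable for testing
        self.sleep = sleep
        self.timestamps = deque()   # start times of requests inside the current window
        self.lock = threading.Lock()

    def acquire(self) -> None:
        """Block until one more request fits in the current window."""
        while True:
            with self.lock:
                now = self.clock()
                # Drop timestamps that have slid out of the window
                while self.timestamps and now - self.timestamps[0] >= self.window:
                    self.timestamps.popleft()
                if len(self.timestamps) < self.max_requests:
                    self.timestamps.append(now)
                    return
                # Wait until the oldest request leaves the window
                wait = self.window - (now - self.timestamps[0])
            self.sleep(wait)
```

Call `limiter.acquire()` immediately before each API request. A TPM limit would need a second budget tracked in tokens rather than requests.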

2. Authentication and Authorization Errors (HTTP 401/403)

What it means: These errors indicate problems with your API credentials or permissions.

Common manifestations:

- 401 Unauthorized: Invalid API key

- 403 Forbidden: Access denied to this resource

- AuthenticationError: Incorrect API key provided

- Expired API keys

- Incorrect environment configuration

- Permission restrictions

How to handle:

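```python
import logging
import os
from typing import Callable

from openai import AuthenticationError  # openai>=1.0 SDK; substitute your provider's exception

logger = logging.getLogger(__name__)


class APIKeyManager:
    def __init__(self, test_request: Callable[[str], None]):
        # test_request(key) is a hypothetical cheap authenticated call (e.g., listing
        # models) that raises AuthenticationError when the key is rejected
        self.test_request = test_request
        self.primary_key = os.getenv('PRIMARY_API_KEY')
        self.fallback_key = os.getenv('FALLBACK_API_KEY')

    def get_valid_key(self):
        try:
            # Test primary key
            self.test_request(self.primary_key)
            return self.primary_key
        except AuthenticationError:
            # Fall back to the secondary key, but fail loudly if none is configured
            logger.warning("Primary API key failed, using fallback")
            if not self.fallback_key:
                raise
            return self.fallback_key
```

Best practices: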

- Store API keys securely (environment variables, secret managers)

- Implement key rotation policies and mechanisms

- Use different keys for different environments

- Monitor key usage and expiration

Proper credential management is part of secure AI system deployment.
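Two small helpers can surface credential problems early. This is a sketch under stated assumptions. The environment variable name is illustrative. The 401/403 mapping follows standard HTTP semantics rather than any one provider’s documented behavior: a 401 means the credential itself was rejected, and a 403 means it is valid but not permitted for this resource.

```python
import os


class MissingCredentialError(RuntimeError):
    """Raised at startup when a required API key is not configured."""


def load_api_key(env_var: str = "PRIMARY_API_KEY") -> str:
    # Fail fast at startup instead of on the first user request
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise MissingCredentialError(f"{env_var} is not set")
    return key


def auth_error_action(status_code: int) -> str:
    """Map an auth failure to an operational action (illustrative policy)."""
    if status_code == 401:
        # Credential missing, malformed, revoked, or expired: rotate or fall back
        return "rotate_key"
    if status_code == 403:
        # Credential accepted but lacks permission: retrying with it won't help
        return "check_permissions"
    return "not_auth_error"
```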

3. Token Limit / Context Window Exceeded Errors

What it means: Every LLM has a maximum context window (e.g., 8K, 128K, or 1M tokens). Suppose the sum of your system prompt, conversation history, user input, and requested output tokens exceeds this limit. The API then rejects the request, because models cannot process “half” a prompt: the entire context must fit within the limit. Some applications silently truncate history to stay under the limit instead. In that case the symptom is subtler: the model forgets earlier parts of the conversation or produces inconsistent responses.

Common manifestations:

- Common Codes: 400 Bad Request, ContextLengthExceeded, TokenLimitError

- InvalidRequestError: Maximum context length exceeded

- Token limit reached: Input too long

- Context window overflow

- Long conversations exceed context window

- Important information dropped during summarization

- Improper memory management in agent frameworks

How to handle:

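```python
from typing import Callable

import tiktoken


def smart_truncate(text: str, max_tokens: int,
                   summarize_text: Callable[[str, int], str],
                   model: str = "gpt-4") -> str:
    """Intelligently truncate text to fit within token limits.

    summarize_text(text, max_tokens) is a hypothetical helper (for example,
    a call to a cheaper model) that condenses text to about max_tokens tokens.
    """
    # Count tokens with the tokenizer that matches the target model
    encoding = tiktoken.encoding_for_model(model)
    tokens = encoding.encode(text)
    if len(tokens) <= max_tokens:
        return text
    if len(tokens) > max_tokens * 2:
        # For very long texts, use summarization
        return summarize_text(text, max_tokens)
    # For moderately long texts, keep only the most recent max_tokens tokens
    return encoding.decode(tokens[-max_tokens:])
```

Best practices: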

- Pre-flight Token Counting: Never guess the length of your prompt. Use tokenizers like tiktoken (for OpenAI models) to count tokens before making the API call.

- Implement RAG: Instead of stuffing entire databases into a prompt, use Retrieval-Augmented Generation (RAG) to fetch and inject only the top K most relevant chunks of data.

- Sliding Window & Summarization: For long-running chatbots, implement a rolling conversation window (e.g., only keep the last 10 messages) or have a background task summarize older messages to save token space.

- Consider chunking long inputs and processing them in segments

- Use streaming for long responses

- Use structured memory systems, such as storing key facts in vector databases

Agent-based systems often require a memory management layer to maintain context effectively.
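A rolling window over chat history can be driven by a token budget rather than a fixed message count. Below is a minimal sketch that assumes OpenAI-style `{"role", "content"}` message dicts. `rough_token_count` is a deliberately crude, hypothetical stand-in for a real tokenizer such as tiktoken.

```python
from typing import Callable

Message = dict[str, str]  # {"role": ..., "content": ...}


def rough_token_count(text: str) -> int:
    # Crude heuristic (~4 characters per token for English); use a real tokenizer in production
    return max(1, len(text) // 4)


def fit_history(messages: list[Message], budget: int,
                count: Callable[[str], int] = rough_token_count) -> list[Message]:
    """Keep the system prompt plus the most recent messages that fit in `budget` tokens."""
    system = [m for m in messages if m["role"] == "system"]
    rest = [m for m in messages if m["role"] != "system"]
    used = sum(count(m["content"]) for m in system)
    if used > budget:
        raise ValueError("System prompt alone exceeds the token budget")
    kept: list[Message] = []
    # Walk backwards from the newest message; stop at the first one that does not fit
    for m in reversed(rest):
        cost = count(m["content"])
        if used + cost > budget:
            break
        kept.append(m)
        used += cost
    return system + list(reversed(kept))
```

Messages that fall out of the window are good candidates for background summarization.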

4. Model Availability Errors (HTTP 503) / Server Overload

What it means: The model or service is temporarily unavailable due to maintenance, overload, or other issues. Generating tokens is computationally expensive. During peak hours, LLM providers’ servers can become overwhelmed. A 503 means the server is too busy, while a 504 usually means the generation took so long that the HTTP connection timed out.

Common manifestations:

- Common Codes: 500 Internal Server Error, 502 Bad Gateway, 503 Service Unavailable, 504 Gateway Timeout

- ModelNotAvailable: The model is currently offline

- ServiceUnavailableError: System overloaded

How to handle:

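```python
import logging
from typing import Awaitable, Callable

logger = logging.getLogger(__name__)


class ServiceUnavailableError(Exception):
    """Placeholder for your SDK's 5xx/overload exception(s)."""


class ModelFailover:
    def __init__(self, call_model: Callable[[dict, str], Awaitable[str]]):
        # call_model(model, prompt) is a hypothetical async wrapper around each provider's SDK
        self.call_model = call_model
        self.models = [
            {'name': 'gpt-4', 'endpoint': 'primary'},
            {'name': 'gpt-3.5-turbo', 'endpoint': 'primary'},
            {'name': 'claude-3', 'endpoint': 'secondary'},
        ]

    async def get_completion(self, prompt):
        last_error = None
        for model in self.models:
            try:
                return await self.call_model(model, prompt)
            except ServiceUnavailableError as e:
                logger.info(f"Model {model['name']} unavailable, trying next")
                last_error = e
        raise RuntimeError("All models unavailable") from last_error
```

Best practices: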

- Multi-Model Fallback Routing: This is the gold standard for enterprise GenAI. If your primary model (e.g., GPT-4o) throws a 500-level error, your code should automatically route the request to a secondary provider (e.g., Claude 3.5 Sonnet or a self-hosted Llama 3 model).

- Enable Streaming: Instead of waiting 30 seconds for a massive block of text (which risks a timeout), stream the response token-by-token. This keeps the connection alive and vastly improves perceived latency for the user.

- Use circuit breaker patterns

- Queue requests for retry during downtime

- Monitor service status pages
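A circuit breaker stops sending traffic to a provider that keeps failing, so failover happens immediately instead of after a slow error on every request. Below is a minimal, single-threaded sketch; the class and its thresholds are illustrative and not a specific library’s API.

```python
import time
from typing import Optional


class CircuitOpenError(RuntimeError):
    """Raised when the breaker is open and calls are short-circuited."""


class CircuitBreaker:
    """Closed -> open after N consecutive failures; after reset_timeout seconds,
    one trial call is let through (half-open) to probe whether the service recovered."""

    def __init__(self, failure_threshold: int = 5, reset_timeout: float = 30.0,
                 clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, func, *args, **kwargs):
        if self.opened_at is not None and self.clock() - self.opened_at < self.reset_timeout:
            # Fail fast instead of hammering an unhealthy provider
            raise CircuitOpenError("circuit open; route to a fallback model")
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            # Trip on reaching the threshold, or re-trip after a failed half-open probe
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        # Success closes the circuit and resets the failure count
        self.failures = 0
        self.opened_at = None
        return result
```

In practice you would keep one breaker per model or endpoint. Catching `CircuitOpenError` in the failover loop lets you move straight to the next model.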

5. Timeout Errors

What it means: The request exceeded the allowed response time and was terminated, either by your client or by an intermediate gateway. LLM responses sometimes take far longer than expected, particularly when the output is long.

Common manifestations:

- TimeoutError: Request timeout after 60 seconds

- ReadTimeoutError: Server did not respond in time

- Gateway Timeout (504)

- Large prompts

- High system load

- Slow downstream tools in agent pipelines

How to handle:

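```python
import asyncio


async def handle_with_timeout(request, make_llm_request, simplify_prompt, timeout=30):
    # make_llm_request (async) and simplify_prompt are hypothetical app-specific helpers
    try:
        return await asyncio.wait_for(make_llm_request(request), timeout=timeout)
    except asyncio.TimeoutError:
        # Try once more with reduced complexity, still bounded by a timeout
        simplified_request = simplify_prompt(request)
        return await asyncio.wait_for(make_llm_request(simplified_request), timeout=timeout)
```

Best practices: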

- Set appropriate timeout values, raising the threshold for legitimately long generations

- Implement progressive degradation

- Use async/await and asynchronous processing for better concurrency

- Stream responses for long-running operations

- Optimize prompt size

Timeout management is critical for real-time applications such as chatbots and AI copilots.
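Streaming can be combined with timeouts by timing out on silence rather than on total duration. Below is a minimal asyncio sketch. It assumes your SDK exposes the response as an async iterator of text chunks; the function name and the 10-second default are illustrative.

```python
import asyncio
from typing import AsyncIterator


async def collect_with_idle_timeout(stream: AsyncIterator[str], idle_timeout: float = 10.0) -> str:
    """Consume a token stream, failing only if no chunk arrives for idle_timeout seconds.

    A long answer that keeps streaming can finish; a stalled connection is cut off quickly.
    """
    parts: list[str] = []
    iterator = stream.__aiter__()
    while True:
        try:
            # Bound the wait for the *next* chunk, not the whole response
            chunk = await asyncio.wait_for(iterator.__anext__(), timeout=idle_timeout)
        except StopAsyncIteration:
            break
        parts.append(chunk)
    return "".join(parts)
```

If you also need a hard upper bound on latency, pair this with an overall cap, such as an outer `asyncio.wait_for` around the whole call.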

6. Content Policy Violations / Safety and Content Moderation Errors

What it means: The input or output violates the AI provider’s content policies. Major LLM providers (Google, OpenAI, Anthropic) enforce safety guardrails. A user prompt may contain hate speech, self-harm content, or PII. In that case, the provider’s safety filters may intercept it and return an error. Sometimes the error happens during generation instead, when the model’s own output trips the provider’s filter.

Common manifestations:

- Common Codes: 400 Bad Request, ContentPolicyViolation, finish_reason: "content_filter"

- ContentPolicyViolation: Request rejected due to policy

- ModerationError: Content flagged as inappropriate

- SafetyFilterTriggered

How to handle:

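```python
import logging

logger = logging.getLogger(__name__)


class ContentModerator:
    def __init__(self, blocked_terms, moderation_api):
        # moderation_api: hypothetical client whose check(text) returns an object with
        # .flagged (bool) and .reason (str); adapt it to your provider's moderation endpoint
        self.blocked_terms = [t.lower() for t in blocked_terms]
        self.moderation_api = moderation_api

    def contains_blocked_terms(self, content):
        text = content.lower()
        return any(term in text for term in self.blocked_terms)

    def pre_moderate(self, content):
        """Check content before sending to LLM"""
        # Basic keyword filtering (cheap but crude: substring matches can misfire)
        if self.contains_blocked_terms(content):
            return False, "Content contains prohibited terms"
        # Use moderation API
        moderation_result = self.moderation_api.check(content)
        if moderation_result.flagged:
            return False, moderation_result.reason
        return True, None

    def handle_policy_error(self, error):
        """Gracefully handle policy violations"""
        logger.warning(f"Content policy violation: {error}")
        # Return safe fallback response
        return {
            'response': "I cannot process this request as it may violate content policies.",
            'error_type': 'content_policy',
            'suggestion': "Please rephrase your request",
        }
```

Best practices: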

- Graceful User Feedback: Catch these specific exceptions and return a polite, neutral message to the user: “I’m sorry, I cannot generate a response to this prompt as it violates safety guidelines.”

- Pre-Moderation: Pass user inputs through a dedicated moderation endpoint (like OpenAI’s free Moderation API) before sending them to the heavier, more expensive LLM.

- Prompt Engineering: Add guardrails to your system prompt instructing the model on how to politely decline inappropriate requests rather than triggering an abrupt API failure.

- Log violations for analysis

- Educate users about content policies
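Output-side filtering does not always raise an exception. With OpenAI-style Chat Completions, the request can succeed while the choice reports `finish_reason == "content_filter"`. Below is a minimal sketch. It assumes the response has been converted to a plain dict (e.g., via the SDK response object’s `model_dump()`), and the fallback wording is illustrative.

```python
SAFE_FALLBACK = "I'm sorry, I can't help with that request."


def extract_text_or_fallback(response: dict) -> tuple[str, bool]:
    """Return (text, was_filtered) from an OpenAI-style chat completion dict."""
    choice = response["choices"][0]
    if choice.get("finish_reason") == "content_filter":
        # The provider's output filter stopped generation: show a neutral message instead
        return SAFE_FALLBACK, True
    return choice["message"].get("content") or "", False
```

The `was_filtered` flag lets you log these events for analysis separately from ordinary failures.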

7. Invalid Request Format Errors (HTTP 400) / Format and Parsing Failures (The Silent Error)

What it means: This category covers two related failures. A 400 Bad Request means the request structure or parameters are incorrect. The silent variant is harder to catch. Unlike the HTTP errors above, the LLM API returns a 200 OK, but the content breaks your application. For example, you asked the LLM to return a JSON object, but it wrapped the JSON in a Markdown code fence (a json-tagged triple-backtick block) or hallucinated a key, and your application’s parser crashed.

Common manifestations:

- Common Codes: JSONDecodeError, ValidationError (Application-side)

- 400 Bad Request: Invalid JSON

- InvalidRequestError: Missing required parameter

- ValidationError: Invalid model specified

- Invalid JSON

- Missing fields

- Incorrect schema

How to handle:

```python
from pydantic import BaseModel, field_validator  # Pydantic v2 (v1 used @validator)


class LLMRequest(BaseModel):
    prompt: str
    model: str
    temperature: float = 0.7

    @field_validator('temperature')
    @classmethod
    def validate_temperature(cls, v):
        if not 0 <= v <= 2:
            raise ValueError('Temperature must be between 0 and 2')
        return v

    @field_validator('model')
    @classmethod
    def validate_model(cls, v):
        valid_models = ['gpt-4', 'gpt-3.5-turbo', 'claude-3']
        if v not in valid_models:
            raise ValueError(f'Model must be one of {valid_models}')
        return v
```

Best practices:

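- Use Structured Outputs/JSON Mode: Where available, use the provider’s native JSON mode or Structured Outputs features. JSON mode guarantees syntactically valid JSON. Structured Outputs additionally constrain the response to a JSON schema you supply. In either case, validate the result against a schema (Pydantic, JSON Schema).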

- Output Parsers & Auto-Correction: Use frameworks like LangChain or LlamaIndex that feature robust output parsers (often backed by Pydantic). If parsing fails, these frameworks can automatically catch the error, send the bad output back to the LLM, and say: “You formatted this wrong. Fix the JSON format.”

- Validate inputs before sending requests

- Provide clear error messages

- Implement request builders/factories

- Use function calling or tool-based responses

This is common in automation workflows where LLM outputs are consumed by downstream systems.
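The strip-the-fence, parse, and ask-the-model-to-fix-it loop described above can be written without a framework. Below is a minimal sketch using only the standard library. `ask_llm` is a hypothetical function that sends a prompt and returns the model’s text reply.

```python
import json
import re
from typing import Callable

FENCE = "`" * 3  # a Markdown code fence (three backticks)
# Captures the payload inside an optional json-tagged fence
FENCE_RE = re.compile(FENCE + r"(?:json)?\s*(.*?)\s*" + FENCE, re.DOTALL)


def parse_llm_json(raw: str) -> dict:
    """Strip an optional Markdown fence and parse the JSON object inside."""
    match = FENCE_RE.search(raw)
    payload = match.group(1) if match else raw.strip()
    data = json.loads(payload)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    return data


def parse_with_repair(raw: str, ask_llm: Callable[[str], str], max_repairs: int = 2) -> dict:
    """Parse the output; on failure, send the error back to the model and retry."""
    for attempt in range(max_repairs + 1):
        try:
            return parse_llm_json(raw)
        except ValueError as err:  # json.JSONDecodeError is a subclass of ValueError
            if attempt == max_repairs:
                raise
            raw = ask_llm(
                f"Your previous output was not valid JSON ({err}). "
                f"Return only the corrected JSON object.\n\n{raw}"
            )
```

Validating the parsed dict with a Pydantic model afterwards also catches hallucinated or missing keys, not just syntax errors.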

8. Network and Connectivity Errors

What it means: Network-level issues preventing communication with the API.

Common manifestations:

- ConnectionError: Failed to establish connection

- DNSError: Could not resolve hostname

- SSLError: Certificate verification failed

How to handle:

```python
import requests
from requests.adapters import HTTPAdapter
from urllib3.util.retry import Retry


def create_resilient_session():
    session = requests.Session()
    retry_strategy = Retry(
        total=3,
        status_forcelist=[408, 429, 500, 502, 503, 504],
        # urllib3 1.26 renamed method_whitelist to allowed_methods (the old name is gone in 2.0).
        # POST is not retried by default; including it means a request may be sent twice.
        allowed_methods=["HEAD", "GET", "OPTIONS", "POST"],
        backoff_factor=1,  # exponential backoff between retries
    )
    adapter = HTTPAdapter(max_retries=retry_strategy)
    session.mount("http://", adapter)
    session.mount("https://", adapter)
    return session
```

Best practices:

- Implement connection pooling

- Use retry strategies with backoff

- Monitor network latency

- Have fallback endpoints ready

9. Hallucination Errors

Hallucination is not a system error but a model behavior issue.

Meaning

The model generates information that appears correct but is actually incorrect or fabricated.

For example, the model may invent research papers, statistics, or citations that do not exist.

Why it happens

- Insufficient context in the prompt

- Weak grounding in real data

- Over-generalization by the model

How to handle it

- Use Retrieval Augmented Generation (RAG)

- Provide verified data sources

- Add grounding constraints in prompts

- Implement output validation layers

Many production systems include fact-checking pipelines or knowledge retrieval systems to reduce hallucinations.
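One simple output validation layer checks that every source the model cites was actually retrieved. Below is a minimal sketch. It assumes the prompt instructs the model to cite retrieved chunks as `[doc-N]`; the citation format and function name are illustrative.

```python
import re

CITATION_RE = re.compile(r"\[(doc-\d+)\]")


def unsupported_citations(answer: str, retrieved_ids: set[str]) -> list[str]:
    """Return cited IDs (in order of first appearance) that were NOT among the retrieved chunks."""
    cited = CITATION_RE.findall(answer)
    # dict.fromkeys removes duplicates while preserving order
    return [c for c in dict.fromkeys(cited) if c not in retrieved_ids]
```

An empty result is necessary but not sufficient. An answer that cites nothing, or that cites a real document for a claim the document does not contain, still needs other checks.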

10. Data Quality Errors

Generative AI systems rely heavily on data quality.

Meaning

Incorrect outputs occur because of poor input data.

Examples include:

- Incomplete documents

- Noisy datasets

- Incorrect embeddings in vector databases

How to handle it

- Implement data validation pipelines

- Clean and normalize input data

- Monitor retrieval quality in RAG systems

Many GenAI failures are actually data pipeline problems rather than model problems.
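A basic validation step before embedding catches several of the problems listed above. Below is a minimal sketch. The 20-character threshold is an illustrative assumption; tune it to your documents.

```python
import hashlib
import unicodedata


def clean_chunks(chunks: list[str], min_chars: int = 20) -> list[str]:
    """Normalize, filter, and de-duplicate text chunks before embedding."""
    seen: set[str] = set()
    cleaned: list[str] = []
    for chunk in chunks:
        # Unicode-normalize (e.g., non-breaking spaces) and collapse runs of whitespace
        text = " ".join(unicodedata.normalize("NFKC", chunk).split())
        # Drop near-empty chunks such as stray headers or page numbers
        if len(text) < min_chars:
            continue
        # Exact-duplicate detection on a case-insensitive hash
        digest = hashlib.sha256(text.lower().encode("utf-8")).hexdigest()
        if digest in seen:
            continue
        seen.add(digest)
        cleaned.append(text)
    return cleaned
```

This only catches exact duplicates; near-duplicate detection (e.g., MinHash) is a common next step.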

11. Prompt Design Errors

Poor prompt design can lead to inaccurate or irrelevant outputs.

Meaning

The model technically works correctly, but the prompt does not provide sufficient instruction.

Examples

- Ambiguous prompts

- Missing context

- Conflicting instructions

How to handle it

- Apply prompt engineering techniques

- Use system prompts and role instructions

- Create reusable prompt templates

Prompt engineering is a critical skill when building GenAI applications.
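Reusable templates also guard against the “missing context” failure above. Below is a minimal sketch built on Python’s standard `string.Template`; the `StrictPrompt` class and the example placeholders are hypothetical.

```python
from string import Template


class StrictPrompt:
    """Reusable system/user prompt pair that refuses to render with missing fields."""

    def __init__(self, system: str, user: str):
        self.system = Template(system)
        self.user = Template(user)

    def render(self, **fields: str) -> list[dict[str, str]]:
        # substitute() raises KeyError on any unfilled $placeholder,
        # so a prompt with missing context never silently reaches the model
        return [
            {"role": "system", "content": self.system.substitute(fields)},
            {"role": "user", "content": self.user.substitute(fields)},
        ]
```

Keeping role instructions in the system template and per-request data in the user template makes prompts easier to version and test.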

12. Agent Workflow Errors

In multi-agent or tool-using systems, errors may occur in orchestration.

Meaning

The AI agent selects the wrong tool or executes steps in an incorrect sequence.

How to handle it

- Add guardrails and validation checks

- Define tool usage constraints

- Monitor agent decision logs

Agent observability tools are becoming essential for debugging such issues.
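Tool usage constraints can be enforced in code, not only in the prompt. Below is a minimal sketch of a guarded tool runner that applies an allowlist, argument checks, and a step limit, and keeps a decision log. The class, registry format, and limits are illustrative assumptions.

```python
from typing import Any, Callable


class ToolPolicyError(RuntimeError):
    """Raised when an agent step violates the tool-usage constraints."""


class GuardedToolRunner:
    """Executes agent tool calls only if they pass explicit constraints."""

    def __init__(self, tools: dict[str, tuple[Callable[..., Any], set[str]]], max_steps: int = 8):
        self.tools = tools          # name -> (function, allowed argument names)
        self.max_steps = max_steps
        self.steps = 0
        self.log: list[tuple[str, dict]] = []  # decision log for observability

    def run(self, name: str, args: dict[str, Any]) -> Any:
        self.steps += 1
        if self.steps > self.max_steps:
            raise ToolPolicyError(f"step limit {self.max_steps} exceeded; possible loop")
        if name not in self.tools:
            raise ToolPolicyError(f"unknown tool {name!r}")
        func, allowed = self.tools[name]
        unexpected = set(args) - allowed
        if unexpected:
            raise ToolPolicyError(f"unexpected arguments for {name}: {sorted(unexpected)}")
        self.log.append((name, args))
        return func(**args)
```

Rejected steps can be fed back to the agent as an error message, so it gets a chance to choose a valid tool rather than crashing the workflow.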

Building a Comprehensive Error Handling Strategy

1. Layered Error Handling Architecture

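```python
from typing import Any, Awaitable, Callable

Strategy = Callable[..., Awaitable[Any]]


class LLMErrorHandler:
    def __init__(self, error_strategies: dict[type, Strategy]):
        # Maps exception classes to async handlers, e.g.
        # {RateLimitError: handle_rate_limit, TokenLimitError: handle_token_limit,
        #  ServiceUnavailableError: handle_service_unavailable,
        #  ContentPolicyError: handle_content_policy, TimeoutError: handle_timeout}
        self.error_strategies = error_strategies

    async def handle_generic(self, error, func, *args, **kwargs):
        # No dedicated strategy: re-raise rather than silently swallowing the error
        raise error

    async def execute_with_handling(self, func, *args, **kwargs):
        try:
            return await func(*args, **kwargs)
        except Exception as e:
            # Walk the MRO so SDK subclasses still match their parent's strategy
            for cls in type(e).__mro__:
                if cls in self.error_strategies:
                    return await self.error_strategies[cls](e, func, *args, **kwargs)
            return await self.handle_generic(e, func, *args, **kwargs)
```

2. Monitoring and Alerting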

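```python
import logging
from collections import defaultdict

logger = logging.getLogger(__name__)


class ErrorMonitor:
    def __init__(self, error_threshold=10):
        self.error_counts = defaultdict(int)  # cumulative; reset periodically in practice
        self.error_threshold = error_threshold

    def send_alert(self, error_type, count):
        # Hypothetical hook: wire this to your paging or chat alerting system
        logger.critical(f"ALERT: {error_type} occurred {count} times")

    def log_error(self, error_type, details):
        self.error_counts[error_type] += 1
        count = self.error_counts[error_type]
        # Alert once, when the threshold is first exceeded, to avoid alert storms
        if count == self.error_threshold + 1:
            self.send_alert(error_type, count)
        # Log to monitoring service
        logger.error(f"LLM Error: {error_type}", extra={
            'error_type': error_type,
            'details': details,
            'count': count,
        })
```

3. User Experience Considerations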

```python
def user_friendly_error_message(error):
    """Convert technical errors to user-friendly messages.

    Assumes RateLimitError, TokenLimitError, ServiceUnavailableError, and
    ContentPolicyError are exception classes imported from your SDK or defined in your app.
    """
    error_messages = {
        RateLimitError: "We're experiencing high demand. Please try again in a moment.",
        TokenLimitError: "Your input is too long. Please try shortening it.",
        ServiceUnavailableError: "The service is temporarily unavailable. We're working on it.",
        ContentPolicyError: "Your request couldn't be processed due to content restrictions.",
        TimeoutError: "The request is taking longer than expected. Please try again.",
    }
    # Walk the class hierarchy so SDK subclasses still map to a friendly message
    for cls in type(error).__mro__:
        if cls in error_messages:
            return error_messages[cls]
    return "An unexpected error occurred. Please try again."
```

Best Practices Summary

- Always implement retry logic with exponential backoff for transient errors

- Validate inputs before making API calls to catch errors early

- Use circuit breakers to prevent cascading failures

- Implement proper logging for debugging and monitoring

- Provide meaningful feedback to users without exposing technical details

- Cache responses, when possible, to reduce API calls

- Monitor error patterns to identify systematic issues

- Have fallback strategies for critical functionality

- Test error scenarios regularly in your development process

- Keep error handling code maintainable and well-documented

The Golden Rule: Defensive AI Architecture

When building GenAI applications, you must adopt a philosophy of Defensive Architecture. Do not assume the LLM will always be fast, available, or compliant.

Wrap your LLM calls in robust try-except blocks. Differentiate between errors that are temporary (like a 503 or throughput 429, which should be retried) and errors that are permanent (like a quota exhaustion 429 or a 400 Context Limit, which require application logic changes or user intervention).
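The temporary-versus-permanent distinction can be captured in one small function that your retry logic consults. Below is a minimal sketch. The set of retryable status codes follows common practice. Detecting billing-quota 429s by the error code `insufficient_quota` is an assumption modeled on OpenAI’s API, so check your provider’s error payloads.

```python
from typing import Optional

# Transient server-side or timeout conditions that usually clear on their own
RETRYABLE_STATUSES = {408, 500, 502, 503, 504}


def is_retryable(status_code: int, error_code: Optional[str] = None) -> bool:
    """Decide whether an LLM API error is transient (retry) or permanent (fix or escalate).

    error_code is the provider's machine-readable error code, if any (assumed format).
    """
    if status_code == 429:
        # Throughput limits clear after a short wait; an exhausted billing quota does not
        return error_code != "insufficient_quota"
    # 400 (e.g., context length), 401, 403, and other client errors need a code or config change
    return status_code in RETRYABLE_STATUSES
```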

By anticipating these failures and building intelligent retries, fallbacks, and content chunking mechanisms, you transition your GenAI app from a fragile prototype to a resilient, production-ready product.

Building Reliable GenAI Systems

To successfully deploy GenAI applications in production, organizations must treat them as AI systems plus distributed software systems. Error handling should include monitoring, logging, retry mechanisms, and evaluation pipelines.

A robust GenAI architecture usually includes:

- Prompt management

- API rate control

- Retrieval systems

- Observability and monitoring

- Evaluation frameworks

- Guardrails and validation layers

By understanding different categories of errors and implementing proper handling mechanisms, teams can build reliable, scalable, and trustworthy AI applications.

As Generative AI adoption grows, error management will become just as important as model performance.

Conclusion

Error handling in LLM/GenAI applications is not just about catching exceptions — it’s about building resilient systems that can gracefully degrade, recover automatically, and provide consistent user experiences even when things go wrong.

By understanding the various error types and implementing comprehensive handling strategies, you can build production-ready GenAI applications that are reliable, scalable, and user-friendly. Remember that error handling is an iterative process; continuously monitor your applications, learn from failure patterns, and refine your strategies accordingly.

The key is to expect failures and design your system to handle them elegantly. With proper error handling in place, your LLM-powered applications can deliver value consistently, even in the face of the inherent complexities and uncertainties of working with cutting-edge AI technologies.

Building robust GenAI applications? Share your error handling experiences and strategies in the comments below. What unique challenges have you encountered, and how did you solve them?

#GenerativeAI #LLM #AIML #AIEngineering #PromptEngineering #AgenticAI #AIArchitecture #AIObservability #MachineLearning #AIProductDevelopment #AgenixAI #AjayVermaBlog

If you like this article and want to show some love:

- Visit my blogs

- Follow me on Medium and subscribe for free to catch my latest posts.

- Let’s connect on LinkedIn / Ajay Verma
