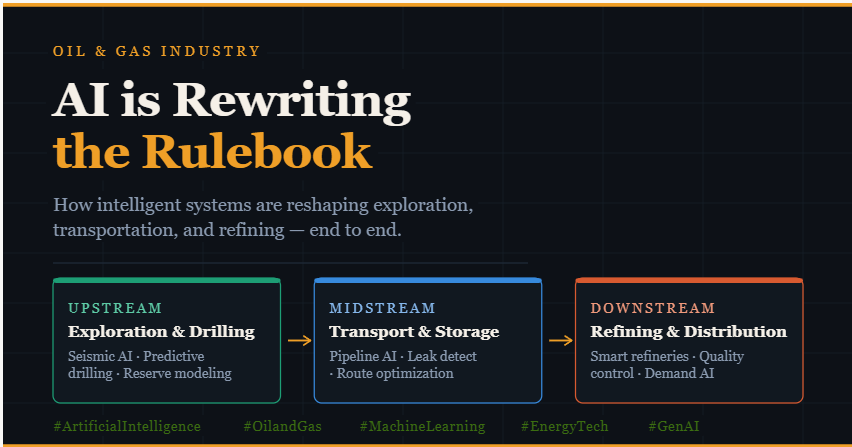

The Digital Rig: How GenAI and Agentic AI are Refining the Oil and Gas Value Chain

The oil and gas industry has always been a high-stakes game of data. From the subtle echoes of seismic waves to the complex chemistry of a refinery, the sector generates petabytes of information. However, the true revolution isn’t just in gathering this data, but in how Artificial Intelligence (AI) and Generative AI (GenAI) are now orchestrating it to drive efficiency and safety across the entire production chain.

Here is how the “Digital Oilfield” is evolving from a concept into a global operational standard.

Upstream: Intelligence at the Source

In the exploration and production phase, the goal is simple, but the execution is incredibly difficult: finding and extracting resources with minimal risk.

- Seismic Interpretation: Traditionally, geologists spent months manually interpreting seismic data. Today, AI models can process these datasets in hours, identifying subtle patterns that indicate hidden reserves. GenAI is even being used to create synthetic subsurface models to train these systems where real-world data is scarce.

- Precision Drilling: Agentic AI is moving beyond simple monitoring. We now see systems that dynamically adjust drilling parameters, such as mud weight and torque, in real time to prevent blowouts or stuck pipes. This reduces non-productive time (NPT) and significantly increases safety for offshore crews.

- Reservoir Management: AI-driven digital twins simulate fluid flow within a reservoir, predicting how it will react over years of extraction. This allows operators like ExxonMobil and Shell to maximize the recovery rate from every well.

- Predictive Drilling and Real-Time Wellbore Optimization: Once drilling begins, AI models ingest sensor data from drill bits — temperature, torque, vibration, rate of penetration — and use it to recommend real-time adjustments to drilling parameters. This reduces mechanical failures, keeps the wellbore on its planned trajectory, and shortens total drilling time. The concept extends to predicting equipment failures before they happen. Submersible pumps, gas compressors, and wellhead valves all generate operational data. Predictive maintenance models trained on this data can identify the early signatures of wear that precede failure, allowing operators to schedule maintenance before a costly shutdown occurs rather than reacting after one.

- Reservoir Modeling and Production Forecasting: Generative AI and physics-informed neural networks are beginning to complement traditional reservoir simulation. These models can rapidly generate multiple plausible production scenarios given uncertainty in reservoir parameters, helping operators make more informed decisions about well placement, injection strategies, and recovery techniques. What previously required weeks of simulation runtime can now be approximated in hours.
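The idea of generating many plausible production scenarios under parameter uncertainty can be illustrated with a minimal sketch. It is not a physics-informed neural network or a reservoir simulator: it assumes a simple exponential decline curve, q(t) = qi * exp(-D * t), and samples the initial rate and decline rate from made-up distributions. The function names, the distributions, and every numeric value are illustrative assumptions, not figures from any operator.

```python
import math
import random

DAYS_PER_YEAR = 365.0


def cumulative_production(qi_bpd, decline_per_year, years):
    """Cumulative barrels under exponential decline q(t) = qi * exp(-D * t).

    qi_bpd: initial rate in barrels per day.
    decline_per_year: nominal decline rate D (1/year), must be > 0.
    years: forecast horizon.
    """
    qi_per_year = qi_bpd * DAYS_PER_YEAR
    # Closed-form integral of the rate curve from 0 to `years`.
    return qi_per_year * (1.0 - math.exp(-decline_per_year * years)) / decline_per_year


def simulate_scenarios(n_scenarios, years, seed=0):
    """Sample uncertain parameters and return sorted cumulative volumes."""
    rng = random.Random(seed)  # seeded so runs are repeatable
    outcomes = []
    for _ in range(n_scenarios):
        # Hypothetical uncertainty ranges, chosen only for illustration.
        qi = rng.lognormvariate(math.log(1000.0), 0.3)
        decline = rng.uniform(0.15, 0.45)
        outcomes.append(cumulative_production(qi, decline, years))
    return sorted(outcomes)


def percentile(sorted_values, pct):
    """Nearest-rank percentile of an already sorted list."""
    rank = math.ceil(pct / 100.0 * len(sorted_values))
    index = min(max(rank - 1, 0), len(sorted_values) - 1)
    return sorted_values[index]


if __name__ == "__main__":
    scenarios = simulate_scenarios(n_scenarios=1000, years=10)
    for pct in (10, 50, 90):
        print(f"{pct}th percentile: {percentile(scenarios, pct):,.0f} barrels")
```

A learned surrogate model would replace `cumulative_production` with something far richer, but the workflow is the same: sample the uncertain inputs, evaluate quickly, and compare the low, median, and high outcomes before committing to a well plan.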

Midstream: The Virtual Pipeline

Once the resources are out of the ground, the challenge shifts to logistics. Midstream operations involve thousands of miles of infrastructure where any failure can have massive environmental and financial costs.

- Predictive Integrity: Instead of “fix when broken,” companies like Kinder Morgan use AI to analyze sensor data from SCADA systems. Anomaly detection algorithms spot tiny pressure drops or temperature shifts that signal a leak or corrosion before a human operator could ever notice them.

- Pipeline Integrity and Leak Detection: Pipeline failures carry enormous financial, regulatory, and environmental consequences. Traditional inspection relies on scheduled physical surveys or periodic in-line inspection tools, so problems are found only at the next inspection. AI is enabling a shift toward continuous, real-time integrity monitoring. Pressure sensors, flow meters, and acoustic sensors along pipeline systems feed data into anomaly detection models that can identify the subtle signatures of micro-leaks, corrosion, or unauthorized interference long before they escalate. Some operators have reported detection windows shrinking from hours to minutes.

- Route Optimization and Logistics Coordination: Moving oil and gas from production fields to processing plants involves decisions about which pipelines to prioritize, how to schedule tanker loading at marine terminals, and how to avoid bottlenecks during maintenance windows. These are fundamentally logistics optimization problems, and they are ones AI handles with particular effectiveness. Reinforcement learning algorithms and graph-based optimization models can evaluate thousands of routing permutations simultaneously, factoring in real-time capacity constraints, tariff structures, and demand signals. The result is better utilization of existing infrastructure without adding physical capacity.

- Predictive Maintenance for Compressor Stations: Compressor stations are critical nodes in gas transmission networks. A single unplanned outage can disrupt supply to entire regions. AI-driven monitoring systems for compressor health now analyze vibration patterns, exhaust temperatures, and lubrication pressures to issue early warnings of developing mechanical issues, turning would-be unplanned outages into scheduled maintenance and making unplanned ones rare exceptions.

- Autonomous Monitoring: Drones and satellite imagery, powered by computer vision, now perform constant flyovers of pipeline networks. These AI systems automatically flag encroaching vegetation, unauthorized construction, or early signs of structural fatigue.

- Throughput Optimization: AI agents manage the complex scheduling of tankers and rail cars. By forecasting demand at different hubs, these systems reroute product flows in real time to avoid bottlenecks and minimize “empty miles” in the transport network.
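The anomaly detection described in the integrity bullets above can be sketched in a few lines. This is a deliberately simple rolling z-score detector, not the method any named operator uses; production systems typically combine several sensors and far more sophisticated models. The function name, window size, threshold, and noise floor are all assumptions chosen for illustration.

```python
from collections import deque
import statistics


def detect_pressure_anomalies(readings, window=20, threshold=4.0, min_stdev=0.05):
    """Return indices of readings that deviate sharply from recent history.

    A reading is flagged when it lies more than `threshold` standard
    deviations from the mean of the last `window` unflagged readings.
    `min_stdev` is a noise floor so a perfectly flat signal does not
    make every tiny fluctuation look extreme.
    """
    history = deque(maxlen=window)
    flagged = []
    for index, value in enumerate(readings):
        if len(history) == window:
            mean = statistics.fmean(history)
            stdev = max(statistics.pstdev(history), min_stdev)
            if abs(value - mean) / stdev > threshold:
                flagged.append(index)
                # Keep the anomalous reading out of the baseline so a
                # sustained pressure drop keeps being flagged.
                continue
        history.append(value)
    return flagged


if __name__ == "__main__":
    # Synthetic pressure trace: small periodic noise around 100 units,
    # with one sudden drop injected at index 45.
    trace = [100.0 + 0.1 * ((i % 5) - 2) for i in range(60)]
    trace[45] = 97.0
    print(detect_pressure_anomalies(trace))
```

Excluding flagged readings from the baseline is a design choice: it stops a genuine leak from gradually becoming the new "normal", at the cost of needing a manual reset after a legitimate operating change.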

Downstream: Precision at Scale

Downstream is where crude oil becomes a consumer product. In refining and retail, margins are thin, and efficiency is everything.

- Refinery Yield Optimization: Modern refineries are massive chemical computers. AI helps capture more of the “crack spread” (the margin between crude cost and refined product prices) by adjusting heat and pressure in distillation units to favor high-value products like diesel and jet fuel.

- Predictive Maintenance: Refineries are harsh environments. AI monitors rotating equipment like pumps and compressors to predict failure weeks in advance, allowing for planned maintenance that avoids the million-dollar-per-day cost of an unplanned shutdown.

- Retail Intelligence: At the gas station level, AI is used for dynamic pricing and inventory management. By analyzing local traffic patterns, weather, and competitor prices, retail operators can optimize their supply chain and even personalize loyalty offers for consumers.

- AI-Driven Process Optimization: Modern refineries generate enormous volumes of real-time process data from thousands of sensors across distillation columns, catalytic crackers, hydrotreaters, and blending units. AI models, particularly reinforcement learning systems trained on refinery simulation environments, can continuously recommend adjustments to operating variables like temperature, pressure, and flow rates to maximize the yield of high-value products given the current crude input. Shell and ExxonMobil have both invested in advanced process control systems that incorporate machine learning layers on top of traditional control architectures, reporting measurable improvements in product yield and energy efficiency.

- Quality Prediction and Blending Optimization: Fuel blending, which combines streams from different refinery units to create finished gasoline or jet fuel that meets precise specifications, has historically relied on laboratory testing cycles that introduce time delays. AI models trained on the relationship between process inputs and final product properties can now predict blend quality in real time, reducing rework, minimizing giveaway (the practice of exceeding specification margins to ensure compliance), and cutting laboratory costs.

- Demand Forecasting and Supply Chain AI: Downstream also encompasses the distribution and retail side of the business. AI models that integrate weather forecasts, regional economic indicators, driving pattern data, and historical demand are now being used by fuel distributors to optimize inventory positioning at terminals and ensure that retail forecourts do not run dry during demand spikes. This reduces the cost of emergency resupply while improving customer availability.

- GenAI in Refinery Operations: Large language models are beginning to find a role in downstream operations as well, not as autonomous decision-makers but as intelligent assistants for field technicians and control room operators. Engineers can query a model trained on refinery documentation, historical incident reports, and equipment manuals to get rapid answers during non-routine situations, reducing the time it takes to diagnose abnormal operating conditions.
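To make the blending and giveaway idea above concrete, here is a small sketch that searches for the cheapest blend meeting an octane specification and reports how far the result exceeds it. It assumes octane blends linearly by volume, which is a known simplification (real blending behaves nonlinearly), and it uses a brute-force grid search rather than the predictive models the bullet describes. The component octanes, costs, and the `cheapest_blend` name are hypothetical.

```python
from itertools import product


def cheapest_blend(octanes, costs, spec, steps=100):
    """Grid-search the lowest-cost blend whose octane meets `spec`.

    octanes, costs: per-component octane rating and unit cost.
    steps: grid resolution; fractions are multiples of 1 / steps.
    Returns a dict with fractions, octane, cost, and giveaway,
    or None when no blend on the grid meets the specification.
    """
    n = len(octanes)
    best = None
    for parts in product(range(steps + 1), repeat=n - 1):
        last = steps - sum(parts)
        if last < 0:
            continue  # fractions must sum to exactly 1
        counts = parts + (last,)
        # Linear-by-volume blending assumption.
        octane = sum(c * o for c, o in zip(counts, octanes)) / steps
        if octane + 1e-9 < spec:
            continue  # off-spec blend
        cost = sum(c * k for c, k in zip(counts, costs)) / steps
        if best is None or cost < best["cost"]:
            best = {
                "fractions": [c / steps for c in counts],
                "octane": octane,
                "cost": cost,
                "giveaway": octane - spec,  # quality delivered beyond spec
            }
    return best


if __name__ == "__main__":
    # Illustrative components only: (octane, cost per unit volume).
    result = cheapest_blend(
        octanes=[90.0, 100.0, 95.0],
        costs=[70.0, 100.0, 90.0],
        spec=93.0,
    )
    print(result)
```

A blender that plays it safe with a 50/50 mix of the first two components would deliver 95 octane against a 93 specification, giving away two octane points of expensive material. Accurate real-time quality prediction is what lets operators run closer to the specification with confidence.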

The Human Architect in the AI Loop

While GenAI can draft a system architecture or a flow diagram for these operations, it still lacks the “contextual wisdom” that engineers provide. AI cannot yet reason about the complex regulatory landscape or the legacy debt of a forty-year-old refinery.

The future of energy is not a world without humans, but one where senior engineers act as architects, using AI to manage the “latency tax” of operations and the “total cost of autonomous resolution.”

The Challenges That Remain

The application of AI across oil and gas is not without friction. Data quality is a persistent issue — legacy infrastructure generates inconsistent or poorly labeled data that limits model performance. Operational technology environments often have strict cybersecurity constraints that complicate the deployment of cloud-based AI systems. And the industry workforce, deeply experienced in traditional methods, requires thoughtful change management to embrace AI-assisted ways of working.

There are also valid concerns about over-reliance on black-box models in safety-critical environments. The industry is investing in explainable AI approaches and human-in-the-loop system designs that keep qualified engineers in the decision chain for high-consequence actions.
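One way to picture a human-in-the-loop design is as a routing rule that sits between the model and the plant. The sketch below is an assumption about how such a gate might look, not a description of any vendor's system: the `Recommendation` fields, the confidence threshold, and the three outcomes are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTO_APPLY = "auto_apply"
    NEEDS_ENGINEER = "needs_engineer"
    REJECT = "reject"


@dataclass(frozen=True)
class Recommendation:
    action: str             # what the model proposes to do
    confidence: float       # model-reported confidence in [0, 1]
    high_consequence: bool  # safety- or environment-critical action?
    explanation: str        # human-readable rationale from the model


def route(rec, min_confidence=0.9):
    """Decide who acts on a model recommendation.

    - No explanation at all: reject, since the output cannot be reviewed.
    - High-consequence or low-confidence: send to a qualified engineer.
    - Otherwise: allow automatic application.
    """
    if not rec.explanation.strip():
        return Decision.REJECT
    if rec.high_consequence or rec.confidence < min_confidence:
        return Decision.NEEDS_ENGINEER
    return Decision.AUTO_APPLY


if __name__ == "__main__":
    rec = Recommendation(
        action="increase mud weight",
        confidence=0.97,
        high_consequence=True,
        explanation="pit volume trend suggests influx",
    )
    print(route(rec))
```

Note that a high-consequence action goes to an engineer regardless of how confident the model claims to be. Confidence alone never bypasses review.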

Conclusion

AI is not a single technology arriving at a fixed moment. It is a wave of capabilities — machine learning, computer vision, reinforcement learning, large language models, digital twins — reaching different parts of the oil and gas industry at different rates and with different implications.

What is clear is that the companies investing in building the data infrastructure, technical talent, and organizational culture to support AI adoption are building a durable competitive advantage. In an industry where a percentage point improvement in refinery yield or a week’s reduction in drilling time can translate to tens of millions of dollars, the return on that investment is very real.

The oil and gas industry has always bet on engineering to solve problems at the edge of human capability. AI is simply the next frontier of that bet.

#OilAndGas #AI #GenerativeAI #EnergyIndustry #Upstream #Midstream #Downstream #DataScience #Innovation #PredictiveMaintenance #DigitalOilfield #EnergyTech #DigitalTransformation #PredictiveAnalytics #UpstreamOil #IndustryIntelligence #AgenixAI #AjayVermaBlog

If you like this article and want to show some love:

- Visit my blogs

- Follow me on Medium and subscribe for free to catch my latest posts.

- Let’s connect on LinkedIn / Ajay Verma
