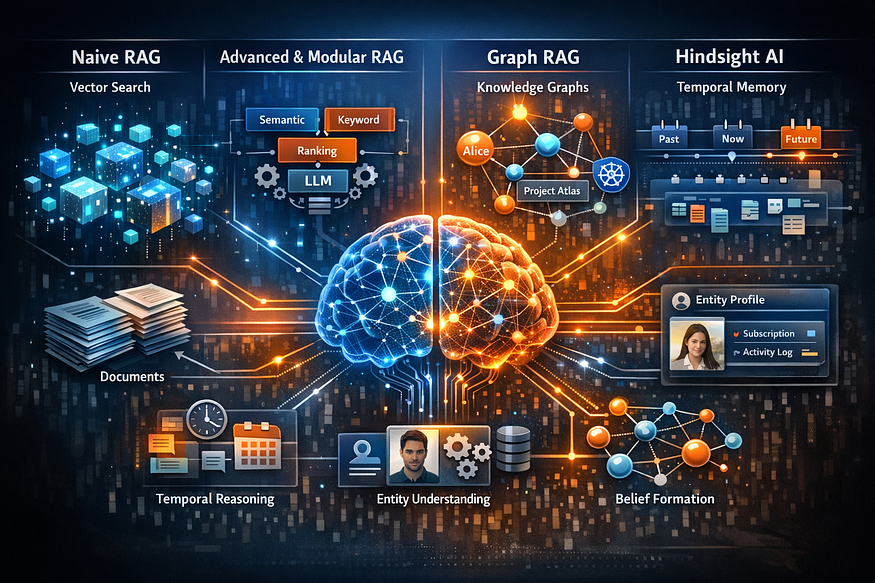

Beyond Naive RAG: From Vector Soup to Hindsight Memory & Temporal Reasoning

If you built a Retrieval Augmented Generation (RAG) pipeline six months ago, I have bad news for you: It’s likely already legacy tech.

We used to think that chunking documents and shoving them into a vector database was the silver bullet for LLM knowledge. We were wrong. Researchers now call this “Naive RAG,” and in production, it is where accuracy goes to die.

The industry is moving fast. We have graduated from simple retrieval to Advanced, Modular, Graph-based, and now, Hindsight architectures. Here is your roadmap to navigating this evolution and preventing your enterprise LLM from hallucinating on its own data.

Naive RAG challenges

- Tabular data after splitting: When tables are flattened into text and naively chunked, row–column relationships are lost, so queries like “total revenue by region in 2024” may retrieve only partial rows or mismatched headers.

- Numbers, product codes, IDs: Dense embeddings are great for semantics but can be poor at exact lookup for identifiers or small numeric differences, so SKU “X120-B” may not rank above “X120-A” even if only one is relevant.

- Multi-hop reasoning: Context for each hop may sit in different chunks; raw top-k similarity often retrieves facts related to only one hop.

- Long documents: Important context is split across many chunks, and top-k may miss one crucial piece, causing subtle hallucinations.

- Evaluation blind spots: Teams often rely only on user anecdotes instead of systematic RAG evaluation, so regressions go undetected.

Naive RAG is a great proof-of-concept, but it is brittle as soon as correctness, coverage, or auditability matter.

From Naive RAG to production-grade RAG

Retrieval-Augmented Generation (RAG) combines external retrieval with LLM generation so models can answer questions over private or frequently changing data without retraining. At its core, a RAG loop embeds a query, retrieves relevant context, and lets the LLM ground its answer in that context instead of hallucinating.

In practice, this “simple” pattern hides many sharp edges: tabular data, IDs and codes, multi-hop reasoning, temporal questions, and evaluation all expose the limits of naive pipelines. As usage scales, teams gradually move through distinct maturity stages: Naive, Advanced, Modular, Graph-aware, and finally structured-memory systems like Hindsight.

Stage 1: The Trap of Naive RAG

Naive RAG follows a linear “Retrieve-Read” framework. You chop a PDF into 512-token chunks, embed them, and retrieve the top 3 matches based on vector similarity.
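To make that concrete, here is a minimal sketch of the Retrieve-Read loop. It assumes a toy bag-of-words "embedding" in place of a real embedding model and counts words rather than tokens; the function names (`chunk`, `embed`, `cosine`, `retrieve`) are illustrative and not taken from any library.

```python
import math
from collections import Counter

def chunk(text: str, size: int = 512) -> list[str]:
    """Split text into fixed-size chunks of `size` words (a stand-in for tokens)."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]

def embed(text: str) -> Counter:
    """Toy embedding: lowercase bag-of-words counts (assumption, not a real model)."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query: str, chunks: list[str], k: int = 3) -> list[str]:
    """Return the k chunks most similar to the query, best first."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]
```

Everything downstream (the prompt, the answer) depends on those top-k chunks, which is why the failure modes below hurt so much.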

Why it fails in the Enterprise:

- The Table Problem: Naive chunking destroys semantic structure. If a financial table is split across two chunks, the LLM loses the header context. It sees a number like “$500M” but doesn’t know if that refers to Revenue or Debt.

- The Specificity Trap: Dense embeddings are great at concepts (e.g., “Fruit” ≈ “Apple”) but unreliable for exact matches (e.g., “Error Code 505”). In embedding space, “Error 505” and “Error 404” can sit very close together, but in engineering, they are worlds apart.

- Lost in the Middle: If you retrieve too much context, LLMs tend to ignore information buried in the middle of the prompt window.
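One possible mitigation for the Table Problem is to serialize every row together with its column names before chunking, so no chunk ever contains a bare number. The sketch below assumes the table has already been parsed into a header and rows; `chunk_table` is a hypothetical helper, and every value other than the “$500M” example is made up for illustration.

```python
def chunk_table(rows: list[list[str]], header: list[str], rows_per_chunk: int = 2) -> list[str]:
    """Emit text chunks in which every cell is labeled with its column name."""
    chunks = []
    for start in range(0, len(rows), rows_per_chunk):
        lines = [
            "; ".join(f"{col}: {val}" for col, val in zip(header, row))
            for row in rows[start:start + rows_per_chunk]
        ]
        chunks.append("\n".join(lines))
    return chunks

# Illustrative data (assumed, not from a real report).
header = ["Region", "Metric", "Value"]
rows = [
    ["EMEA", "Revenue", "$500M"],
    ["EMEA", "Debt", "$120M"],
    ["APAC", "Revenue", "$300M"],
]
chunks = chunk_table(rows, header)
```

Here the second chunk reads `Region: APAC; Metric: Revenue; Value: $300M`, so the number keeps its meaning even though the header row lives in a different chunk.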

Stage 2: Advanced RAG (Fixing the Pipeline)

To fix Naive RAG, we don’t just upgrade the model; we fix the data pipeline before and after retrieval.

- Pre-Retrieval (Query Expansion): Users are bad at prompting. Advanced RAG uses an LLM to rewrite a vague query like “How’s the plan working?” into precise search terms like “Project Atlas Q3 performance metrics.”

- Post-Retrieval (The Reranker): Vector search is fast but “messy.” In Advanced RAG, we retrieve 50 documents and pass them through a Cross-Encoder Reranker. This model acts as a harsh judge, scoring documents on actual relevance and discarding the noise before the LLM ever sees them.
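The retrieve-then-rerank pattern can be sketched as two stages with different cost profiles. This is a toy version: the first stage uses term overlap instead of vector search, and `phrase_score` is a hypothetical stand-in for a cross-encoder model, which in a real system would score each (query, document) pair with a neural network.

```python
from typing import Callable

def first_stage(query: str, docs: list[str], k: int = 50) -> list[str]:
    """Cheap, recall-oriented stage: rank by number of shared lowercase terms."""
    q_terms = set(query.lower().split())
    ranked = sorted(docs, key=lambda d: len(q_terms & set(d.lower().split())), reverse=True)
    return ranked[:k]

def rerank(
    query: str,
    candidates: list[str],
    score: Callable[[str, str], float],
    top_n: int = 3,
) -> list[str]:
    """Precision-oriented stage: rescore each (query, doc) pair and keep the best."""
    return sorted(candidates, key=lambda d: score(query, d), reverse=True)[:top_n]

def phrase_score(query: str, doc: str) -> float:
    """Hypothetical stand-in for a cross-encoder: rewards the exact query phrase."""
    return 1.0 if query.lower() in doc.lower() else 0.0
```

The design point is the split itself: the first stage can afford to be sloppy because it only has to get the right document somewhere into the candidate set, while the slower scorer only ever sees a few dozen candidates.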

Stage 3: Modular RAG (The Agentic Shift)

RAG is no longer a straight line; it’s a loop. Modular RAG treats the system like a toolbox of microservices.

- The Router: Not every query needs a vector search. A routing module analyzes the request:

  - Current events? → Route to Web Search.

  - Company Policy? → Route to Vector DB.

  - Math? → Route to Python Interpreter.

- Self-Correction (The Loop): If the retrieved data doesn’t answer the question, the system doesn’t just hallucinate. It triggers a feedback loop, rewriting the query and searching again. It’s essentially a “backspace” button for AI.
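A rough sketch of both modules follows. The keyword rules in `route` are a deliberately crude assumption (production routers often use an LLM or a trained classifier), and `search`, `rewrite`, and `is_sufficient` are hypothetical callables you would supply yourself.

```python
from typing import Callable, Optional

def route(query: str) -> str:
    """Toy keyword router; the rules here are illustrative assumptions."""
    q = query.lower()
    if any(word in q for word in ("today", "latest", "news")):
        return "web_search"
    if any(ch.isdigit() for ch in q) and any(op in q for op in "+-*/"):
        return "python_interpreter"
    return "vector_db"

def answer_with_retry(
    query: str,
    search: Callable[[str], list[str]],
    rewrite: Callable[[str], str],
    is_sufficient: Callable[[list[str]], bool],
    max_attempts: int = 3,
) -> Optional[list[str]]:
    """Search, check the result, and rewrite the query until the context is good enough."""
    for _ in range(max_attempts):
        context = search(query)
        if is_sufficient(context):
            return context
        query = rewrite(query)  # the "backspace": try again with a better query
    return None  # give up explicitly instead of answering from weak context
```

Returning `None` after `max_attempts` matters as much as the retry: it gives the caller a clean signal to say "I don't know" rather than generate from noise.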

Stage 4: Graph RAG (Connecting the Dots)

Vector databases struggle with Multi-Hop Reasoning.

- Fact A: Alice works on Project Atlas.

- Fact B: Project Atlas uses Kubernetes.

- Fact C: Kubernetes had an outage on Tuesday.

If you ask, “Was Alice affected by the outage?”, a vector search is likely to match Fact A (on “Alice”) and perhaps Fact C (on “outage”), but it has no reason to retrieve Fact B, the link that connects them.

Graph RAG solves this by constructing a Knowledge Graph. It traverses the edges (relationships) between entities. It sees the path: Alice → Project Atlas → Kubernetes → Outage, allowing the LLM to deduce the impact even without direct keyword overlap.
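The traversal itself can be as simple as a breadth-first search. The sketch below hard-codes the three facts above as an adjacency list; the relation names (`works_on`, `uses`, `had`) and the `find_path` helper are assumptions for illustration, and a real Graph RAG system would extract the graph from documents and usually query a graph database.

```python
from collections import deque
from typing import Optional

# Facts A, B, and C as (relation, target) edges. Relation names are illustrative.
GRAPH = {
    "Alice": [("works_on", "Project Atlas")],
    "Project Atlas": [("uses", "Kubernetes")],
    "Kubernetes": [("had", "Outage (Tuesday)")],
}

def find_path(graph: dict, start: str, goal: str) -> Optional[list[str]]:
    """Breadth-first search returning the shortest entity path, or None."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == goal:
            return path
        for _relation, neighbor in graph.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(path + [neighbor])
    return None

path = find_path(GRAPH, "Alice", "Outage (Tuesday)")
```

The returned path (Alice, Project Atlas, Kubernetes, Outage) is what gets handed to the LLM as context, so the model sees the connecting facts even though none of them share keywords with the question.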

Stage 5: Hindsight (The Temporal & Psychological Layer)

This is the bleeding edge. While Graph RAG handles relationships, Hindsight handles Time and Beliefs.

Traditional RAG is “time-blind.” If you ingest data from 2021 and 2024, similarity search alone ranks both without regard to date, so stale facts compete with current ones. Hindsight introduces Temporal Reasoning and Entity Understanding.

How Hindsight differs from RAG:

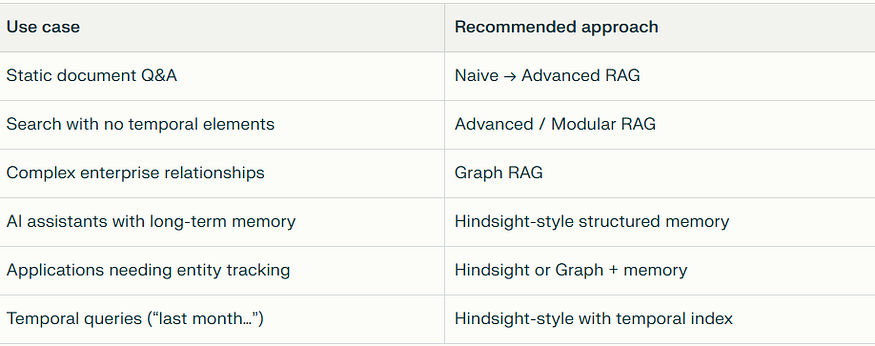

For most teams, the journey is incremental: Naive → Advanced → Modular → Graph or Hindsight for the hardest reasoning and memory problems.

Temporal Parsing:

- Query: “What did Alice do last spring?”

- RAG: Returns Alice-related facts regardless of date.

- Hindsight: Parses “last spring” into a specific date range (March–May), filters the retrieval, and ignores irrelevant data from October.
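Here is a small sketch of that parse-then-filter step. It makes two assumptions that a real system would need to handle properly: spring means March through May (Northern Hemisphere), and “last spring” means the most recent spring that has fully ended. The helper names `last_spring` and `filter_by_range` are hypothetical.

```python
from datetime import date

def last_spring(today: date) -> tuple[date, date]:
    """Resolve 'last spring' to the most recent completed March-May window."""
    # If this year's spring is already over, use it; otherwise fall back a year.
    year = today.year if today > date(today.year, 5, 31) else today.year - 1
    return date(year, 3, 1), date(year, 5, 31)

def filter_by_range(facts: list[tuple[date, str]], start: date, end: date) -> list[str]:
    """Keep only facts whose timestamp falls inside [start, end]."""
    return [text for when, text in facts if start <= when <= end]
```

With facts dated in March, April, and October of the same year and a query asked in November, only the March and April facts survive the filter; the October fact never reaches the LLM.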

Parallel Retrieval Fusion:

Instead of one search, Hindsight executes four in parallel: Semantic (Concept), BM25 (Exact Keyword), Graph (Relationships), and Temporal (Time). It then fuses these results using Reciprocal Rank Fusion (RRF) for a holistic view.
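Reciprocal Rank Fusion is simple enough to write in a few lines: each document earns 1 / (k + rank) from every ranked list it appears in, and the sums decide the final order. The sketch below is a generic RRF implementation, not Hindsight's own code; k = 60 is a commonly used default, and the document IDs are placeholders.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse several ranked lists of document IDs into one, best first."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)

# Placeholder results from the four retrievers.
semantic = ["d1", "d2", "d3"]
bm25 = ["d3", "d1"]
graph = ["d2", "d1"]
temporal = ["d1"]
fused = reciprocal_rank_fusion([semantic, bm25, graph, temporal])
```

Because RRF uses only ranks, it sidesteps the problem that cosine similarities, BM25 scores, and graph distances live on incompatible scales. A document that appears in all four lists (here `d1`) rises to the top even if no single retriever was overwhelmingly confident about it.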

Belief Evolution:

Hindsight tracks the state of an entity over time.

- Week 1: User struggles with Python.

- Week 4: User asks complex AsyncIO questions.

- System Conclusion: Hindsight updates the user profile from “Novice” to “Intermediate,” adjusting future answers to be more technical. Standard RAG would treat every interaction as Day 1.
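A heavily simplified sketch of that idea: keep a profile per user, update it as evidence arrives, and retain the history so the change is auditable. The promotion rule (one level up per advanced-topic signal), the extra “Advanced” level, and the `UserProfile` class are all assumptions for illustration, not how Hindsight actually models beliefs.

```python
from dataclasses import dataclass, field

LEVELS = ["Novice", "Intermediate", "Advanced"]  # "Advanced" is an assumed extra level

@dataclass
class UserProfile:
    level: str = "Novice"
    # (week, level after that week's observation), kept for auditability
    history: list[tuple[int, str]] = field(default_factory=list)

    def observe(self, week: int, advanced_topic: bool) -> None:
        """Promote the user one level when they engage with an advanced topic."""
        idx = LEVELS.index(self.level)
        if advanced_topic and idx < len(LEVELS) - 1:
            self.level = LEVELS[idx + 1]
        self.history.append((week, self.level))

profile = UserProfile()
profile.observe(week=1, advanced_topic=False)  # struggles with Python basics
profile.observe(week=4, advanced_topic=True)   # asks complex AsyncIO questions
```

After the two observations, `profile.level` is “Intermediate”, and the answer generator can read that field to pitch explanations at the right depth.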

Search types in Hindsight-style systems

- Semantic: capture paraphrasing and conceptual similarity (“microservices refactor” ≈ “service decomposition”).

- Syntactic / keyword (BM25): handle exact terms, IDs, proper names, and error messages.

- Graph: traverse related entities and dependencies to answer multi-hop questions.

- Temporal: interpret expressions like “last month,” “in Q1,” “since 2023” as concrete ranges.
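To see why the keyword channel earns its place, here is a compact BM25 scorer. It implements one common variant of the formula (smoothed IDF, with typical defaults k1 = 1.5 and b = 0.75) and uses naive whitespace tokenization, which is an assumption; real engines tokenize far more carefully. The sample documents are invented.

```python
import math
from collections import Counter

def bm25_scores(query: str, docs: list[str], k1: float = 1.5, b: float = 0.75) -> list[float]:
    """Score each document against the query with BM25 (whitespace tokens)."""
    tokenized = [d.lower().split() for d in docs]
    n = len(tokenized)
    avgdl = sum(len(toks) for toks in tokenized) / n
    # Document frequency: in how many documents each term appears.
    df = Counter(term for toks in tokenized for term in set(toks))
    scores = []
    for toks in tokenized:
        tf = Counter(toks)
        score = 0.0
        for term in query.lower().split():
            if term not in tf:
                continue
            idf = math.log((n - df[term] + 0.5) / (df[term] + 0.5) + 1)
            length_norm = k1 * (1 - b + b * len(toks) / avgdl)
            score += idf * tf[term] * (k1 + 1) / (tf[term] + length_norm)
        scores.append(score)
    return scores

docs = ["sku x120-a in stock", "sku x120-b discontinued", "returns policy"]
scores = bm25_scores("X120-B", docs)
```

Only the second document gets a non-zero score, because “x120-b” and “x120-a” are simply different tokens. That is exactly the behavior you want for SKUs, error codes, and IDs, and exactly what a dense embedding may blur.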

Concrete scenarios

Multi-hop reasoning

Stored facts:

- “Alice is the tech lead on Project Atlas”

- “Project Atlas uses Kubernetes”

- “Kubernetes cluster had an outage Tuesday”

Query: “Was Alice affected by recent issues?”

- Traditional RAG: tends to retrieve only Alice-related text; “issues” may not match “outage” strongly enough.

- Hindsight-style: uses graph retrieval to chain Alice → Project Atlas → Kubernetes → outage and infer that Alice was likely impacted.

Temporal queries

Facts with timestamps:

- March: “Alice started microservices migration”

- April: “Alice completed auth service”

- October: “Alice focusing on performance”

Query: “What did Alice do last spring?”

- Traditional RAG: returns all Alice facts regardless of date.

- Hindsight-style: parses “last spring” → March–May, filters events to that range, and answers precisely.

Entity understanding and synthesis

Facts about a user across sessions:

- “Pro subscription”

- “Mobile app crashes in settings”

- “Switched to annual billing”

- “Desktop app working fine”

Query: “What do you know about my account?”

- Traditional RAG: surfaces a bag of disconnected snippets.

- Hindsight-style: aggregates into a structured view (subscription status, billing model, known issues, platforms) and answers at the entity level.

Belief evolution over time

Over multiple weeks:

- Week 1: user struggles with async Python, prefers threads.

- Week 3: user asks about asyncio and implements async DB calls.

- Traditional RAG: treats each interaction separately, with no explicit notion of progression.

- Hindsight-style: updates an internal belief like “user prefers sync” → “user becoming comfortable with async,” affecting future suggestions and explanations.

Hindsight-style systems are particularly strong for AI assistants with persistent memory, entity tracking, and temporal reasoning needs.

The Strategy: RAG vs. Fine-Tuning

A common question remains: Should I use RAG or Fine-Tune?

Think of it like a student taking an exam:

- RAG is an Open-Book Exam: The student (LLM) writes answers by looking up specific pages in a textbook. It is perfect for facts that change often (e.g., stock prices, Jira tickets).

- Fine-Tuning is Studying: The student internalizes the information. They learn the methodology, the jargon, and the style.

- Use RAG when knowledge changes frequently

- Use Fine-Tuning when behavior/style must change

- Use Hindsight when memory, time, and beliefs matter

Most production systems need RAG + agents + memory, not just bigger models.

The Winning Move: Don’t fine-tune the LLM on facts; fine-tune the Embedder and Retriever to understand your industry jargon, then use RAG (or Hindsight) to access the facts.

Measuring success: the RAG Triad and evaluation

Without measurement, RAG optimization is guesswork. At a high level, track three dimensions:

- Retrieval quality: Are retrieved passages relevant and complete?

- Generation quality: Is the answer correct, grounded, and well-structured?

- User impact: Do users find it useful (CSAT, task success, time-to-answer)?

To evaluate the pipeline itself, use the RAG Triad (frameworks like RAGAS automate comparable metrics):

- Context Relevance: Did the retrieval step find useful passages, or just noise?

- Groundedness: Is the answer supported by the retrieved documents, or did the AI hallucinate?

- Answer Relevance: Did the AI actually answer the user’s specific question?

Common RAG evaluation patterns include:

- Synthetic Q&A generation from your corpus for offline scoring.

- Judging retrieval precision/recall with small human-labeled sets.

- Faithfulness metrics that detect hallucinations vs retrieved context.

- Live A/B tests across chunking and retrieval variants.

Robust evaluation is what turns RAG from experimentation into a reliable platform primitive.
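The simplest place to start is retrieval precision and recall against a small human-labeled set. The sketch below assumes you have, for each test question, the ranked document IDs your retriever returned and the set of IDs a human marked as relevant; the function name and the sample IDs are illustrative.

```python
def precision_recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> tuple[float, float]:
    """Precision@k and recall@k for one query."""
    top_k = retrieved[:k]
    hits = sum(1 for doc_id in top_k if doc_id in relevant)
    precision = hits / len(top_k) if top_k else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall

# One labeled example: the retriever's ranking vs. the human-marked relevant set.
retrieved = ["d1", "d4", "d2", "d9"]
relevant = {"d1", "d2", "d3"}
precision, recall = precision_recall_at_k(retrieved, relevant, k=3)
```

In this example two of the top three results are relevant and two of the three relevant documents were found, so both numbers come out to 2/3. Averaging these over a few dozen labeled questions and tracking them per release is often enough to catch the regressions that anecdotes miss.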

Final Takeaway

RAG has evolved from retrieval to reasoning, and now to memory-driven intelligence.

Naive RAG answers questions.

Graph RAG reasons over knowledge.

Hindsight understands context, time, and users.

If you’re still treating RAG as “vector search + LLM”, you’re already behind.

Conclusion

If you are relying on Naive RAG for enterprise applications, you are building on a shaky foundation. For static knowledge bases, Advanced RAG is the minimum standard. For complex data with relationships, look to Graph RAG. And if your application requires memory, user history, or time-sensitivity, Hindsight architecture is the future.

#RAG #GraphRAG #HindsightAI #LLM #GenAI #AgenticAI #AIArchitecture #KnowledgeGraphs #EnterpriseAI #MLOps #AIMemory #AdvancedRAG #MachineLearning #AIEngineering #AIML

If you like this article and want to show some love:

- Visit my blogs

- Follow me on Medium and subscribe for free to catch my latest posts.

- Let’s connect on LinkedIn / Ajay Verma
