Beyond the Goldfish Brain: Why Memory is the Secret Sauce for AI Agents

Imagine talking to a brilliant assistant who has read every book on Earth but forgets who you are the moment you leave the room. Every time you return, you have to re-explain your preferences, your job, and your past projects.

This is the current reality of Large Language Models (LLMs). Despite their vast knowledge, they are born with a “goldfish brain.” To transform these models into true AI Agents, we need to give them a way to remember.

Why Memory Matters in GenAI

Memory isn’t just a nice-to-have feature for AI agents — it’s the bridge between impressive demos and truly intelligent systems. Without memory, an AI agent is like a savant with amnesia: capable of extraordinary reasoning but unable to learn from experience or build on past interactions.

The difference between a stateless chatbot and a memory-enabled agent is the difference between a helpful stranger and a trusted colleague. One answers your questions competently; the other understands your context, anticipates your needs, and grows more valuable over time.

Stateless vs Stateful: The Architectural Choice

The shift from stateless to stateful agents represents a fundamental architectural evolution.

Stateless agents (Pure LLM) are simpler, more predictable, and easier to scale horizontally. Each request is independent. You can load-balance across servers without worrying about session affinity.

- No history

- No learning

- Predictable but limited

Stateful agents (Agent + Memory) are more complex but also more capable. They maintain internal state that evolves over time. They learn, adapt, and improve with use.

- Persistent identity

- Personalization

- Long-term planning

- Adaptive behavior

The future isn’t about choosing one over the other — it’s about knowing when each approach is appropriate and building systems that can operate in both modes depending on the use case.

How LLMs Work at Inference: Stateless by Design

At their core, LLMs are stateless prediction engines. When you send a prompt to GPT-4, Claude, or any other model, here’s what happens:

- Your prompt is tokenized and encoded

- The model processes it through billions of parameters

- It generates a response token by token

- The request completes; nothing about it is retained

There’s no “save state” button. No internal database of past interactions. Each API call is completely independent. The model doesn’t “remember” you from yesterday, or even from five minutes ago.

This stateless design is actually elegant from an engineering perspective — it makes models scalable, parallelizable, and predictable. But it creates a significant gap between what these models can do and what intelligent agents need to do.

The Core Problem: LLMs Have No Memory

The challenge runs deeper than just forgetting conversations. Without memory, LLMs cannot:

- Personalize responses based on user preferences or history

- Learn from feedback or mistakes over time

- Maintain consistency across different sessions

- Build relationships that deepen with repeated interactions

- Prioritize what’s important versus what’s trivial

- Reference past decisions or reasoning chains

This isn’t a bug — it’s a fundamental architectural characteristic. But it’s also the bottleneck preventing AI agents from becoming truly useful long-term partners.

Building Memory Around LLMs

Since LLMs themselves don’t have memory, we need to build memory systems around them. This is where the architecture gets interesting.

Context Window and In-Context Learning

The first and most obvious solution is the context window — the amount of text an LLM can process in a single request. Modern models like GPT-4 Turbo can handle 128K tokens, and Claude can process up to 200K tokens.

In-context learning leverages this by including relevant information directly in the prompt. You can stuff conversation history, user preferences, relevant documents, and examples all into that context window, and the model will use it to generate informed responses.

This works remarkably well for immediate tasks. If you’re summarizing a long document or answering questions about a specific dataset, a large context window is often sufficient.

But here’s the catch: context windows are not memory.

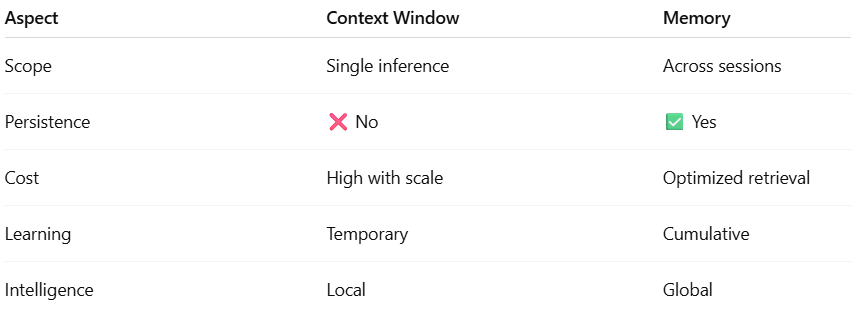

LLM Context Window vs Memory: A Critical Distinction

A common misconception — and one that persists despite evidence to the contrary — is that large context windows will eliminate the need for memory systems.

“Why build complex memory architecture when we can just stuff everything into a 200K token context window?”

The answer comes down to three fundamental limitations:

Cost: Tokens aren’t free. Processing 100K tokens per request adds up quickly, especially for high-volume applications. Memory systems retrieve only relevant information, reducing token count by orders of magnitude.

Latency: Larger contexts take longer to process. Users expect sub-second responses, but processing massive context windows can take several seconds or more.

Effectiveness: Research shows LLMs struggle with information retrieval from long contexts, especially the “lost in the middle” phenomenon. A focused context with selectively retrieved memories often outperforms a bloated context with everything.
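A quick back-of-the-envelope calculation makes the cost argument concrete. All of the following are hypothetical placeholders, not any provider's actual pricing:

- the price per million input tokens
- the daily request volume
- the 2K-token size of a retrieved-memory prompt

```python
# All numbers are hypothetical placeholders; real prices vary by provider and model.
PRICE_PER_MILLION_INPUT_TOKENS = 3.00  # assumed USD per 1M input tokens
REQUESTS_PER_DAY = 10_000              # assumed traffic


def daily_input_cost(tokens_per_request: int) -> float:
    """Daily input-token cost for a fixed prompt size."""
    total_tokens = tokens_per_request * REQUESTS_PER_DAY
    return total_tokens / 1_000_000 * PRICE_PER_MILLION_INPUT_TOKENS


full_context = daily_input_cost(100_000)  # stuff everything into the window
retrieved = daily_input_cost(2_000)       # inject only a few retrieved memories

print(f"Full context:     ${full_context:,.2f}/day")        # $3,000.00/day
print(f"Retrieved memory: ${retrieved:,.2f}/day")           # $60.00/day
print(f"Reduction:        {full_context / retrieved:.0f}x")  # 50x
```

With these assumed numbers, sending only retrieved memories is 50x cheaper on input tokens. The ratio simply tracks the ratio of prompt sizes, whatever the actual price is.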

Context windows help agents stay consistent within a session. Memory allows agents to be intelligent across sessions.

The Starting Point: LLMs are Stateless by Design

At its core, an LLM at the time of inference is just a highly complex mathematical function: $y = f_\theta(x)$

- $x$ is your prompt (input tokens).

- $\theta$ represents the billions of parameters learned during training.

- $y$ is the AI’s response.

Because $\theta$ is fixed at inference time, this function is stateless: it doesn’t “change” after it answers you. If you ask the same question in two different sessions, the model has no intrinsic “memory” that you asked it the same thing 30 seconds ago.

Short-Term Memory: The “Note-Passing” Trick

To make AI feel like it remembers a conversation, developers use Short-Term Memory (STM).

How it works:

Instead of sending only your latest question ($x_2$) to the AI, the system “cheats.” It grabs the previous question ($x_1$) and the AI’s previous answer ($y_1$), stitches them together with $x_2$, and sends the whole history as a new, giant prompt.
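The note-passing trick can be sketched in a few lines of Python. Both functions are hypothetical:

- `toy_llm` stands in for $y = f_\theta(x)$. It is a pure function of its prompt, not a real model or API.
- `chat_turn` is the application code that stitches the history into each new prompt.

```python
import re


def toy_llm(prompt: str) -> str:
    """Hypothetical stand-in for y = f_theta(x): the reply depends only on `prompt`.

    It answers "What is my name?" by searching the prompt for "my name is <Name>".
    Like a real model at inference time, it keeps nothing between calls.
    """
    latest = prompt.rsplit("User: ", 1)[-1]  # the newest user message
    if "what is my name" in latest.lower():
        match = re.search(r"my name is (\w+)", prompt, re.IGNORECASE)
        return f"Your name is {match.group(1)}." if match else "I don't know your name."
    return "Nice to meet you."


def chat_turn(history: list[tuple[str, str]], user_msg: str) -> str:
    """Short-term memory: replay prior (x, y) pairs plus the new x as one prompt."""
    transcript = "".join(f"User: {x}\nAssistant: {y}\n" for x, y in history)
    prompt = f"{transcript}User: {user_msg}\nAssistant:"
    reply = toy_llm(prompt)
    history.append((user_msg, reply))  # the application, not the model, keeps the log
    return reply


thread = []  # one conversation "thread"
print(chat_turn(thread, "My name is Ada."))   # Nice to meet you.
print(chat_turn(thread, "What is my name?"))  # Your name is Ada.

new_thread = []  # closing the chat and starting over wipes the memory
print(chat_turn(new_thread, "What is my name?"))  # I don't know your name.
```

The history lives in the application's `thread` list, not in the model. A new thread gives the model nothing to work with.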

The Limitation: The Context Window

This history lives in the Context Window. While modern models have windows reaching 100K or even 1M tokens (enough for several books), this approach has several major flaws:

- Fragility: This memory is “Thread-Scoped.” If you close the chat and start a new “Thread,” the memory is wiped clean. The AI becomes a stranger again.

- Cost and Latency: Every additional token costs money and time. Because the whole history is re-sent on every turn, a 50-message conversation can easily reach thousands of tokens per request, making each call expensive and slow.

- Context Dilution: As conversations grow longer, important information gets buried. LLMs suffer from the “lost in the middle” problem — they’re better at using information at the beginning and end of context than in the middle.

- No Cross-Session Persistence: The moment the session ends, everything disappears. Tomorrow’s conversation starts from zero again.

- No Prioritization: Every message gets equal treatment. Your name has the same weight as a random comment about the weather.

- Scalability Issues: You can’t maintain conversation history for millions of users across months of interactions — you’d need to store and process impossibly large contexts.
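A common mitigation for the cost and scalability problems is a rolling window that keeps only the most recent messages within a token budget. The sketch below assumes a crude estimate of one token per word; real tokenizers count differently.

It also shows the downside of this approach: the oldest message, which holds the user's name, is the first to be dropped.

```python
def approx_tokens(text: str) -> int:
    """Crude token estimate (assumption): one token per whitespace-separated word."""
    return len(text.split())


def trim_history(messages: list[str], budget: int) -> list[str]:
    """Keep the most recent messages whose combined size fits within `budget`.

    Older messages are dropped first, which is why early facts
    (like the user's name) silently fall out of short-term memory.
    """
    kept: list[str] = []
    used = 0
    for msg in reversed(messages):  # walk from newest to oldest
        cost = approx_tokens(msg)
        if used + cost > budget:
            break
        kept.append(msg)
        used += cost
    return list(reversed(kept))  # restore chronological order


chat = [
    "My name is Ada and I work on payments.",  # 9 words
    "Can you review this SQL query?",          # 6 words
    "Now add an index on user_id.",            # 6 words
    "What is my name?",                        # 4 words
]
print(trim_history(chat, budget=16))
# ['Can you review this SQL query?', 'Now add an index on user_id.', 'What is my name?']
```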

The Three Pillars of Agentic Memory

True intelligence requires more than just re-reading a chat log. The most sophisticated memory systems maintain a persistent internal state built on three pillars:

- State: Knowing what’s happening right now — the current conversation, active tasks, working variables.

- Persistence: Retaining knowledge across sessions, days, or even months. This is what transforms an agent from a tool into a partner.

- Selection: Deciding what’s worth remembering and when to recall it. Not everything deserves permanent storage, and not every memory is relevant to every conversation.

These three pillars work together to create agents that feel intelligent, contextual, and genuinely helpful over time.

Why We Need Long-Term Memory

True intelligence requires long-term memory — the ability to retain, recall, and reason about information across sessions and over extended periods.

Think about what this enables:

- An AI assistant that remembers you prefer concise responses with technical details

- A coding agent that recalls the architecture decisions from your last project

- A customer service bot that knows your purchase history and previous issues

- An educational tutor that tracks what concepts you’ve mastered and where you struggle

Long-term memory transforms agents from tools you use into partners you work with.

Long-Term Memory (LTM): The Real Game Changer

To solve the “Stranger Problem,” we implement Long-Term Memory. This is memory that survives beyond a single conversation. In AI architecture, we categorize LTM into three types:

Episodic Memory (What happened): Records of specific past events and interactions.

- The user asked about Python debugging on March 15th.

- Last week, the deployment failed because of a missing credential.

- We discussed migrating from PostgreSQL to MongoDB last month.

- Captures the what, when, and context of past interactions

Semantic Memory (What is true): General knowledge and learned facts about the user or the world.

- The user is a backend developer who prefers TypeScript.

- The user prefers Python over Java.

- They’re working on a healthcare SaaS product.

- They prefer detailed explanations over quick summaries.

- Extracts persistent truths from episodic experiences.

Procedural Memory (How to do things): Learned patterns and processes, such as rules or workflows picked up over time.

- When this user asks for code, include error handling by default.

- When solving SQL problems for this user, always use window functions instead of subqueries.

- Always provide deployment commands after sharing backend code.

- Captures how to interact effectively with this specific user.
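One way to represent these three types in code is a single record with a type tag. The `MemoryRecord` schema below is a hypothetical design for illustration, not a standard; this includes its field names and the 0 to 1 `importance` scale.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class MemoryType(Enum):
    EPISODIC = "episodic"      # what happened
    SEMANTIC = "semantic"      # what is true
    PROCEDURAL = "procedural"  # how to do things


@dataclass
class MemoryRecord:
    user_id: str
    kind: MemoryType
    content: str
    importance: float = 0.5  # hypothetical 0..1 scale set during encoding
    created_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


memories = [
    MemoryRecord("u42", MemoryType.EPISODIC,
                 "Last week, the deployment failed because of a missing credential."),
    MemoryRecord("u42", MemoryType.SEMANTIC,
                 "The user is a backend developer who prefers TypeScript.", importance=0.9),
    MemoryRecord("u42", MemoryType.PROCEDURAL,
                 "When this user asks for code, include error handling by default.", importance=0.8),
]

# One possible policy (an assumption): apply procedural rules on every turn,
# and retrieve episodic/semantic memories only when they are relevant.
rules = [m.content for m in memories if m.kind is MemoryType.PROCEDURAL]
print(rules)  # ['When this user asks for code, include error handling by default.']
```

A single table with a type column keeps storage simple. The type tag then lets retrieval treat each kind differently.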

How a Long-Term Memory System Operates

Building a “Stateful” AI involves a 4-step workflow that wraps around the LLM:

Step 1: Creation/Update (Memory Encoding): Not everything deserves to be remembered. The system needs to extract salient information from conversations and encode it into structured memory entries. The system analyzes the chat and asks, “Is there anything here worth saving forever?” It extracts “memory candidates” and filters out noise.

This might involve:

- Summarizing long conversations into key points

- Identifying user preferences and facts

- Extracting entities, relationships, and context

- Tagging memories with metadata (timestamp, topic, importance)
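Production systems usually ask an LLM to perform this extraction. To keep the sketch runnable without a model, a set of hypothetical regex patterns stands in for that step. Each match becomes a memory candidate tagged with a topic and an importance score, and low-importance chatter is filtered out.

```python
import re

# Hypothetical patterns standing in for an LLM-based extractor:
# (regex, topic tag, importance score in 0..1)
PATTERNS = [
    (re.compile(r"\bI prefer ([^.!?]+)", re.IGNORECASE), "preference", 0.9),
    (re.compile(r"\bI(?: am|'m) an? ([^.!?]+)", re.IGNORECASE), "profile", 0.8),
    (re.compile(r"\bI(?: am|'m) working on ([^.!?]+)", re.IGNORECASE), "project", 0.7),
    (re.compile(r"\b(?:nice|lovely|bad) weather\b", re.IGNORECASE), "small_talk", 0.1),
]


def extract_candidates(message: str, min_importance: float = 0.5) -> list[dict]:
    """Turn one chat message into tagged memory candidates, dropping noise."""
    candidates = []
    for pattern, topic, importance in PATTERNS:
        for match in pattern.finditer(message):
            candidates.append(
                {"fact": match.group(0).strip(), "topic": topic, "importance": importance}
            )
    # Filter: only candidates at or above the importance threshold are kept.
    return [c for c in candidates if c["importance"] >= min_importance]


msg = "Hi! I'm a backend developer. I prefer concise answers. Nice weather today."
for candidate in extract_candidates(msg):
    print(candidate)
# {'fact': 'I prefer concise answers', 'topic': 'preference', 'importance': 0.9}
# {'fact': "I'm a backend developer", 'topic': 'profile', 'importance': 0.8}
```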

Step 2: Storage: The extracted facts are saved in a durable store (like a vector database or a key-value store) so they persist across sessions. Vector databases like Pinecone, Weaviate, or Chroma are popular choices because they enable semantic search: finding relevant memories based on meaning, not just keywords.

Step 3: Retrieval: When a new conversation begins or you ask a new question, the system searches the store for relevant past memories. This is where semantic search shines: it can find memories related to the current topic even if the exact words don’t match.
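Storage and retrieval can be sketched with a tiny in-process store. The bag-of-words `embed` function is an assumption made only so the example runs without dependencies. Unlike a learned embedding model, it matches overlapping words rather than meaning. The per-user filter shows how one user's memories are kept out of another user's results.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class MemoryStore:
    """Minimal in-process store: (user_id, text, vector) rows plus similarity search."""

    def __init__(self) -> None:
        self.rows: list[tuple[str, str, Counter]] = []

    def add(self, user_id: str, text: str) -> None:
        self.rows.append((user_id, text, embed(text)))

    def search(self, user_id: str, query: str, k: int = 2) -> list[str]:
        q = embed(query)
        scored = [(cosine(q, vec), text) for uid, text, vec in self.rows if uid == user_id]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [text for score, text in scored[:k] if score > 0]


store = MemoryStore()
store.add("u42", "User prefers Python over Java.")
store.add("u42", "Deployment failed last week because of a missing credential.")
store.add("u42", "User is building a healthcare SaaS product.")
store.add("u7", "User prefers Java.")

print(store.search("u42", "Why did the deployment fail?", k=1))
# ['Deployment failed last week because of a missing credential.']
```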

Step 4: Injection: The system “injects” only the relevant memories back into the LLM’s short-term context.
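The injection step amounts to formatting retrieved memories into the prompt while respecting a size budget. This sketch makes two assumptions:

- the memories arrive sorted most-relevant first;
- a character budget is a good enough proxy for a token budget.

`build_prompt` and its header text are illustrative, not a standard format.

```python
def build_prompt(question: str, memories: list[str], max_chars: int = 300) -> str:
    """Inject retrieved memories into the prompt without exceeding a size budget.

    `memories` is assumed to be sorted most-relevant first, so when the budget
    runs out, the least relevant memories are the ones left out.
    """
    header = "Relevant facts about this user (from long-term memory):\n"
    lines: list[str] = []
    used = len(header)
    for memory in memories:
        line = f"- {memory}\n"
        if used + len(line) > max_chars:
            break  # budget exhausted; stop injecting
        lines.append(line)
        used += len(line)
    if not lines:
        return f"User question: {question}"
    return header + "".join(lines) + f"\nUser question: {question}"


retrieved = ["User prefers Python over Java.", "User is building a healthcare SaaS product."]
print(build_prompt("How should I structure this API?", retrieved))
```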

Memory Management: Over time, memories need to be:

- Updated when information changes

- Consolidated to reduce redundancy

- Prioritized by importance and recency

- Archived or deleted when no longer relevant
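Prioritization, consolidation, and archiving can be sketched with a simple scoring rule: importance multiplied by an exponential recency decay. The 30-day half-life and the 0.1 archive threshold are arbitrary assumptions you would tune.

Consolidation here only merges exact (case-insensitive) duplicates. Real systems often use an LLM or embedding similarity to merge paraphrases.

```python
import math
from dataclasses import dataclass

SECONDS_PER_DAY = 86_400
HALF_LIFE_DAYS = 30.0  # assumption: a memory's weight halves every 30 days unused


@dataclass
class Memory:
    text: str
    importance: float  # 0..1, assigned when the memory was encoded
    last_used: float   # seconds since epoch


def priority(m: Memory, now: float) -> float:
    """Importance weighted by exponential recency decay."""
    age_days = (now - m.last_used) / SECONDS_PER_DAY
    return m.importance * math.pow(0.5, age_days / HALF_LIFE_DAYS)


def consolidate(memories: list[Memory]) -> list[Memory]:
    """Merge exact duplicates (case-insensitive), keeping max importance and latest use."""
    merged: dict[str, Memory] = {}
    for m in memories:
        key = m.text.strip().lower()
        if key in merged:
            old = merged[key]
            merged[key] = Memory(old.text, max(old.importance, m.importance),
                                 max(old.last_used, m.last_used))
        else:
            merged[key] = m
    return list(merged.values())


def archive_stale(memories: list[Memory], now: float,
                  threshold: float = 0.1) -> tuple[list[Memory], list[Memory]]:
    """Split memories into (keep, archive) by current priority."""
    keep = [m for m in memories if priority(m, now) >= threshold]
    archive = [m for m in memories if priority(m, now) < threshold]
    return keep, archive


now = 100 * SECONDS_PER_DAY
raw = [
    Memory("Prefers Python.", 0.9, now - 40 * SECONDS_PER_DAY),
    Memory("prefers python.", 0.6, now),  # duplicate, seen again today
    Memory("Mentioned rainy weather.", 0.2, now - 60 * SECONDS_PER_DAY),
]
keep, archive = archive_stale(consolidate(raw), now)
print([m.text for m in keep])     # ['Prefers Python.']
print([m.text for m in archive])  # ['Mentioned rainy weather.']
```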

Memory Integration: Retrieved memories must be intelligently woven into the prompt in a way that’s natural and effective, without overwhelming the context window.

Designing Short‑Term vs Long‑Term Memory

A practical rule of thumb for agents:

- Short‑term memory = what the AI remembers now

  - Last turns of the chat, current workflow state, active variables.

  - Implemented as: rolling window of messages + in‑prompt working state.

- Long‑term memory = what the AI learns and recalls later

  - Summaries of past conversations, stable preferences, historical tasks and outcomes.

  - Implemented as: persistent stores (vector DBs, key‑value stores, SQL, graphs) plus retrieval policies.

Short‑term memory keeps the agent coherent within a session.

Long‑term memory allows it to learn, personalize, and adapt across sessions, teams, and time.
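This rule of thumb maps naturally onto two data structures: a bounded queue for short-term memory and a keyed store for long-term memory. `AgentMemory` is a hypothetical sketch. Its `dict` stands in for whatever persistent store (vector DB, key-value store, SQL) a real agent would use.

```python
from collections import deque


class AgentMemory:
    """Hypothetical sketch of the short-term / long-term split.

    short_term: bounded window of recent turns (what the AI remembers now).
    long_term:  per-user facts that outlive the session (what it recalls later).
    """

    def __init__(self, window: int = 6) -> None:
        self.short_term: deque[str] = deque(maxlen=window)  # oldest turns drop off
        self.long_term: dict[str, dict[str, str]] = {}       # stand-in for a real store

    def observe(self, turn: str) -> None:
        self.short_term.append(turn)

    def learn(self, user_id: str, key: str, value: str) -> None:
        self.long_term.setdefault(user_id, {})[key] = value

    def end_session(self) -> None:
        self.short_term.clear()  # working memory is discarded; long_term survives

    def context_for(self, user_id: str) -> str:
        facts = "; ".join(f"{k}={v}" for k, v in self.long_term.get(user_id, {}).items())
        recent = "\n".join(self.short_term)
        return f"[Long-term] {facts}\n[Short-term]\n{recent}"


mem = AgentMemory(window=2)
mem.learn("u42", "preferred_language", "Python")
for turn in ["Hi!", "Review my SQL query.", "Now add an index."]:
    mem.observe(turn)
print(list(mem.short_term))  # ['Review my SQL query.', 'Now add an index.']
mem.end_session()
print(mem.context_for("u42"))
```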

Memory Is Not RAG (But They Work Well Together)

It’s worth explicitly addressing another common confusion: memory systems are not the same as Retrieval-Augmented Generation (RAG).

RAG retrieves external knowledge (usually from documents) to ground responses in facts:

- “What does our company policy say about remote work?”

- “Summarize the findings from this research paper”

Memory retrieves knowledge about the user and past interactions:

- “What programming languages does this user prefer?”

- “What problem were we solving in our last conversation?”

RAG is fundamentally stateless — it has no awareness of who you are or what you’ve discussed before.

Memory is inherently stateful — it’s all about persistent, personalized context.

That said, they’re complementary. An advanced agent might use both:

- RAG to retrieve relevant documentation

- Memory to understand the user’s skill level, preferences, and history with the topic
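The division of labor can be made explicit in the prompt-building code. In this sketch, the document corpus, the user-memory table, and the naive word-overlap retriever are all hypothetical. The point is the contrast: the RAG lookup returns the same documents for every user, while the memory lookup is keyed by `user_id`.

```python
# Hypothetical shared knowledge base (RAG side): identical for every user.
DOCS = [
    "Employees may work remotely up to three days per week.",
    "Meal expenses over 50 dollars require a receipt.",
]

# Hypothetical per-user memory (memory side): keyed by who is asking.
USER_MEMORY = {
    "u42": ["Manages a distributed team across three time zones.",
            "Prefers bullet-point answers."],
}


def words(text: str) -> set[str]:
    return {w.strip("?.,!").lower() for w in text.split()}


def rag_retrieve(question: str) -> list[str]:
    """Toy document retrieval by word overlap; knows nothing about the user."""
    q = words(question)
    return [doc for doc in DOCS if q & words(doc)]


def build_context(user_id: str, question: str) -> str:
    docs = rag_retrieve(question)         # grounding facts about the world
    facts = USER_MEMORY.get(user_id, [])  # persistent facts about this user
    return "\n".join(
        ["Documents:"] + [f"- {d}" for d in docs]
        + ["About the user:"] + [f"- {f}" for f in facts]
        + [f"Question: {question}"]
    )


print(build_context("u42", "Can my team work remotely?"))
```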

Challenges and Tools for Memory Systems

Building production-grade memory systems is nontrivial. Some key challenges include:

Privacy and Security: User memories contain sensitive information. How do you protect it? How do you handle data retention policies and right-to-deletion requests?

Accuracy and Hallucination: Memory systems can create false memories or misremember details. How do you ensure reliability?

Consistency: As memories accumulate, they may contradict each other. How do you resolve conflicts?

Performance: Retrieving and processing memories adds latency. How do you keep responses snappy?

Cost: Vector databases and additional LLM calls for memory encoding aren’t free. How do you optimize costs at scale?
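Two of these challenges, consistency and right-to-deletion, can be sketched with a small fact store. The policy here is an assumption: the most recent observation wins, but the full history is kept so conflicting values can be surfaced (for example, to ask the user to confirm).

Real deletion would also have to reach backups, vector indexes, and logs, which this in-memory sketch does not model.

```python
from collections import defaultdict
from typing import Optional


class FactStore:
    """Semantic facts with history, so contradictions are visible rather than lost.

    Hypothetical policy: the most recent observation wins when resolving a value.
    """

    def __init__(self) -> None:
        # user_id -> key -> list of (timestamp, value) observations
        self._facts = defaultdict(lambda: defaultdict(list))

    def record(self, user_id: str, key: str, value: str, ts: float) -> None:
        self._facts[user_id][key].append((ts, value))

    def resolve(self, user_id: str, key: str) -> Optional[str]:
        history = self._facts.get(user_id, {}).get(key, [])
        return max(history)[1] if history else None  # latest timestamp wins

    def conflicts(self, user_id: str) -> dict[str, list[str]]:
        """Keys for which the user has given more than one distinct value."""
        result = {}
        for key, history in self._facts.get(user_id, {}).items():
            values = sorted({value for _, value in history})
            if len(values) > 1:
                result[key] = values
        return result

    def forget_user(self, user_id: str) -> None:
        """Right-to-deletion: drop every stored fact for this user."""
        self._facts.pop(user_id, None)


facts = FactStore()
facts.record("u42", "preferred_language", "Java", ts=1.0)
facts.record("u42", "preferred_language", "Python", ts=2.0)
print(facts.resolve("u42", "preferred_language"))  # Python
print(facts.conflicts("u42"))  # {'preferred_language': ['Java', 'Python']}
facts.forget_user("u42")
print(facts.resolve("u42", "preferred_language"))  # None
```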

Fortunately, several tools and frameworks are emerging to help:

- LangChain and LlamaIndex: Provide memory abstractions and integrations

- MemGPT: Specialized memory management for LLM agents

- Mem0: Purpose-built memory layer for AI applications

- Zep: Fast, scalable memory store for conversational AI

- LangMem & SuperMemory: Tools for building external long-term memory layers for agents

Tools and Patterns for Memory in GenAI

Although specifics evolve rapidly, most production systems rely on a few emerging patterns:

Vector stores for semantic & episodic memory

- Example: storing conversation chunks, task summaries, decisions with embeddings and metadata.

Relational / key‑value stores for structured semantic memory

- Example: user preferences table, “known projects,” feature flags.

Knowledge graphs for long‑lived, relational facts

- Example: entities (users, projects, documents, APIs) and edges (“uses,” “owns,” “depends on”).

Memory‑aware orchestrators and agents

- Agent frameworks (LangGraph‑style, custom state machines) that treat memory as a first‑class component: read, write, and route based on memory at every step.

Summarization pipelines

- Scheduled jobs that compress raw logs into higher‑level summaries, keeping memory both compact and meaningful over time.
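The knowledge-graph pattern can be illustrated with a minimal in-memory triple store, using the edge types named above ("uses," "owns," "depends on"). This is a toy sketch with hypothetical entity names. A production system would typically use a dedicated graph database.

```python
from collections import defaultdict


class TripleStore:
    """Toy knowledge graph of (subject, relation, object) edges."""

    def __init__(self) -> None:
        self.out_edges = defaultdict(set)  # subject -> {(relation, object), ...}

    def add(self, subject: str, relation: str, obj: str) -> None:
        self.out_edges[subject].add((relation, obj))

    def neighbors(self, subject: str, relation: str) -> set[str]:
        return {o for r, o in self.out_edges.get(subject, set()) if r == relation}

    def reachable(self, start: str, relation: str) -> set[str]:
        """Follow one relation transitively (e.g., all indirect dependencies)."""
        seen: set[str] = set()
        stack = [start]
        while stack:
            node = stack.pop()
            for nxt in self.neighbors(node, relation):
                if nxt not in seen:
                    seen.add(nxt)
                    stack.append(nxt)
        return seen


kg = TripleStore()
kg.add("alice", "owns", "billing-service")
kg.add("billing-service", "uses", "api-docs")
kg.add("billing-service", "depends on", "payments-api")
kg.add("payments-api", "depends on", "postgres")

print(kg.neighbors("alice", "owns"))                          # {'billing-service'}
print(sorted(kg.reachable("billing-service", "depends on")))  # ['payments-api', 'postgres']
```

A graph turns a multi-hop question such as "what does Alice's service ultimately depend on?" into a traversal rather than a similarity search.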

The Future of Memory in LLMs

As the field matures, I believe we’ll see memory systems become as fundamental to AI agents as databases are to traditional applications.

Some exciting directions:

Hierarchical Memory: Multiple memory layers operating at different timescales — working memory for the current task, episodic memory for recent sessions, semantic memory for long-term knowledge.

Shared Memory: Teams of agents that can access and contribute to collective memory pools.

Memory Graphs: Memories stored not just as isolated facts but as interconnected knowledge graphs that capture relationships and context.

Forgetting Mechanisms: Just as humans forget irrelevant information, agents may need mechanisms to gracefully age out or compress old memories.

Autobiographical Memory: Agents that can reflect on their own history and development over time.

Google’s recent Titans and MIRAS research explores architectures in which the model can “learn” and “recall” within its own neural weights while it’s running (memorizing at test time), potentially reducing the need for massive external databases.

Research is moving toward “Intrinsic Memory.”

The frontier is moving from “LLMs with a database” to truly memory‑centric agents:

- Learned memory controllers that decide what to store, where, and when to retrieve — trained on interaction data.

- Hybrid architectures where some memory is parameterized (fine‑tuning, adapters) and some is external (databases, graphs).

- Cross‑agent memory sharing within organizations, where multiple agents collaboratively evolve a shared workspace memory while respecting permissions.

- Richer cognitive models: attention, forgetting, and consolidation that more closely mirror human memory dynamics rather than flat logs and one‑off summaries.

As context windows keep growing, they will remain powerful — but they won’t replace memory. True GenAI/agent intelligence will come from systems that treat memory as a core architectural primitive, not just a bigger prompt.

Closing Thoughts

We’re still in the early days of memory-enabled AI agents. The techniques are evolving rapidly, the tools are maturing, and best practices are still being discovered.

But one thing is clear: memory is the missing piece that transforms impressive language models into truly intelligent agents. It’s the difference between a calculator and a colleague, between a search engine and a mentor.

As we build the next generation of AI systems, memory won’t be an optional feature — it’ll be a foundational requirement. The agents that win will be those that remember, learn, and grow alongside the humans they serve.

The future of AI isn’t just about bigger models or longer context windows. It’s about building systems that remember who we are, what we care about, and how to help us better over time.

And that future is being built right now.

Conclusion

Memory is what turns a chatbot into a partner. By moving from stateless “goldfish” models to stateful, persistent agents, we move closer to AI that doesn’t just process data, but actually understands the context of our lives and work.

Rule of thumb:

- Short-Term Memory = What the AI remembers now to keep the conversation coherent.

- Long-Term Memory = What the AI learns and recalls later to make the relationship personal.

Short-Term (Working Memory)

- Conversation history

- Temporary variables

- Current focus & attention

Long-Term Memory

- Preferences

- Learned behaviors

- Past experiences

#GenAI #AgenticAI #LLMMemory #AIArchitecture #AIAgents #ArtificialIntelligence #RAG #LongTermMemory #ContextWindow #FutureOfAI

If you like this article and want to show some love:

- Visit my blogs

- Follow me on Medium and subscribe for free to catch my latest posts.

- Let’s connect on LinkedIn / Ajay Verma
