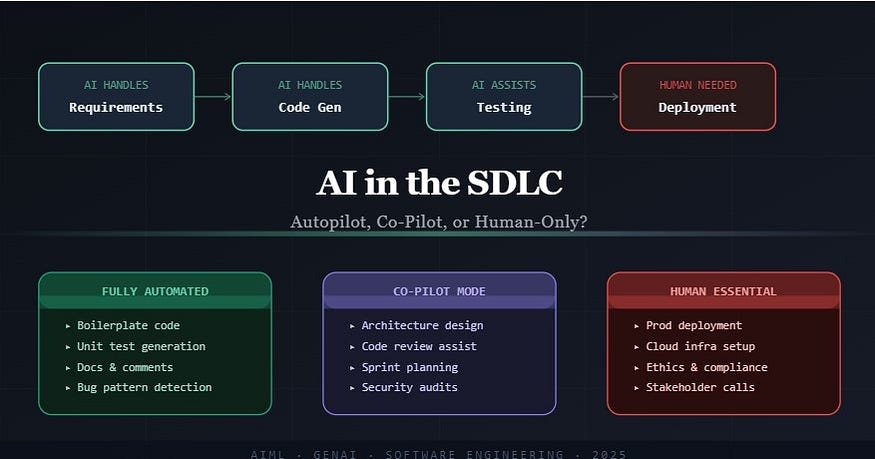

AI in the SDLC: Autopilot, Co-Pilot, or Human-Only?


The software industry is going through its most significant productivity shift since the move to cloud-native architecture. Generative AI is no longer a novelty at the edges of the development workflow; it now sits at the center of it. But there’s a real gap between what AI can do on its own, what it should do only under human oversight, and what still genuinely requires a seasoned engineer making judgment calls.

Let’s cut through the hype and be specific.

Where AI Has Taken the Wheel

Requirements and user story generation used to mean hours of meetings, transcription, and document formatting. Tools powered by large language models can now parse raw business input, a Slack conversation, or even a voice recording and produce structured user stories, acceptance criteria, and edge-case lists in minutes. The quality is not perfect, but the output is often a strong 80% draft that a product manager refines rather than writes from scratch.

Code generation is where the transformation is most visible. GitHub Copilot, Cursor, and similar tools have shifted the baseline of what a single developer can produce in a day. Boilerplate CRUD operations, API endpoint scaffolding, data transfer object definitions, and configuration files are now largely auto-generated. More impressively, AI can translate natural language prompts into working logic for well-defined problems, write unit tests for a given function, and suggest refactors for readability.

Documentation and code comments were historically the last thing developers wrote and the first thing to become outdated. AI tools with access to the codebase can generate inline documentation as code is written and update it alongside code changes, keeping it synchronized in a way that manual effort rarely achieves.
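AI tools handle the writing, but the most common kind of drift is also easy to detect mechanically. As a minimal illustration (a plain rule-based check, not how any particular AI tool works), the Python sketch below flags parameters that a function's docstring never mentions. The function name `undocumented_params` is hypothetical.

```python
import inspect
import re


def undocumented_params(func) -> list[str]:
    """Return parameter names in func's signature that its docstring never mentions."""
    doc = inspect.getdoc(func) or ""
    # Skip the implicit receiver arguments of methods.
    params = [name for name in inspect.signature(func).parameters if name not in ("self", "cls")]
    # Whole-word match, so a parameter named "id" is not satisfied by the word "valid".
    return [name for name in params if not re.search(rf"\b{re.escape(name)}\b", doc)]


def transfer(source, target, amount, currency="USD"):
    """Move amount from source to target."""


print(undocumented_params(transfer))  # ['currency']
```

Run in CI, a check like this catches a newly added parameter that nobody documented, though it cannot tell whether the prose describing existing parameters is still accurate.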

Static code analysis and bug pattern detection benefit from models trained on billions of lines of code. Patterns that lead to null pointer exceptions, SQL injection vulnerabilities, memory leaks, or race conditions can now be flagged before a pull request is even opened.
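The tools described here rely on learned models, but the underlying idea of flagging a risky pattern before review can be shown with a much simpler rule-based check. The sketch below uses Python's standard `ast` module to find calls to `execute()` or `executemany()` whose query argument is assembled at runtime (f-strings, `+`, `%`, or `.format()`). The method names and the rule itself are assumptions for illustration; a real analyzer would also track data flow.

```python
import ast

# Method names commonly used to run SQL on DB-API cursors (an assumption, not exhaustive).
EXECUTE_METHODS = {"execute", "executemany"}


def _is_dynamic_string(node: ast.expr) -> bool:
    """True if the expression builds a string at runtime rather than using a constant."""
    if isinstance(node, ast.JoinedStr):  # f"... {x} ..."
        return any(isinstance(part, ast.FormattedValue) for part in node.values)
    if isinstance(node, ast.BinOp) and isinstance(node.op, (ast.Add, ast.Mod)):
        return True  # "..." + x  or  "..." % x
    if isinstance(node, ast.Call) and isinstance(node.func, ast.Attribute):
        return node.func.attr == "format"  # "...".format(x)
    return False


def find_sql_injection_risks(source: str) -> list[int]:
    """Return line numbers where a dynamically built query is passed to execute()."""
    risky_lines = []
    for node in ast.walk(ast.parse(source)):
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Attribute)
            and node.func.attr in EXECUTE_METHODS
            and node.args
            and _is_dynamic_string(node.args[0])
        ):
            risky_lines.append(node.lineno)
    return sorted(risky_lines)


sample = '''
cur.execute(f"SELECT * FROM users WHERE name = '{name}'")
cur.execute("SELECT * FROM users WHERE name = %s", (name,))
cur.execute("DELETE FROM orders WHERE id = " + order_id)
'''
print(find_sql_injection_risks(sample))  # [2, 4]
```

The parameterized query on line 3 passes because its query text is a constant and the value travels separately, which is the pattern such tools steer developers toward.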

Where AI Works Best Alongside a Human

System architecture and design decisions still require a human at the helm, but AI has become an invaluable thinking partner here. When a senior architect is evaluating microservices versus a modular monolith for a given scale requirement, they can now stress-test their thinking against an AI that has absorbed thousands of architecture case studies. The decision remains human, but the ideation phase is richer and faster.

Code review is another hybrid zone. AI-assisted PR review tools can flag style inconsistencies, highlight logic gaps, and even estimate complexity, but they miss context. They do not know that this particular module is maintained by one engineer who will be on leave during the next release, or that this API contract has an implicit upstream dependency that is not documented anywhere. Human reviewers bring that institutional knowledge.

Sprint planning and backlog refinement have seen AI tools enter as estimation assistants. Given historical velocity data and the shape of a new ticket, AI can suggest story point estimates and flag tickets that historically lead to scope creep. Product teams use this as an input, not a verdict.
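The article does not say how these tools compute their suggestions. One plausible minimal approach, shown here purely as a hypothetical sketch, is nearest-neighbour lookup: find the past tickets whose labels overlap most with the new one, suggest the median of their actual effort, and raise a scope-creep flag when those tickets typically grew well beyond their original estimate. The `Ticket` fields, `k=3`, and the 1.5× growth threshold are all assumptions.

```python
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class Ticket:
    labels: frozenset[str]  # e.g. frozenset({"backend", "payments"})
    estimated_points: int   # estimate given at sprint planning (assumed > 0)
    actual_points: int      # effort the ticket actually turned out to need


def jaccard(a: frozenset[str], b: frozenset[str]) -> float:
    """Label overlap from 0.0 (disjoint) to 1.0 (identical)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def suggest_estimate(labels: frozenset[str], history: list[Ticket], k: int = 3,
                     creep_ratio: float = 1.5) -> tuple[float, bool]:
    """Return (suggested points, scope-creep flag) from the k most similar past tickets."""
    if not history:
        raise ValueError("need at least one historical ticket")
    # sorted() is stable, so tied tickets keep their original order.
    nearest = sorted(history, key=lambda t: jaccard(labels, t.labels), reverse=True)[:k]
    suggested = median(t.actual_points for t in nearest)
    growth = median(t.actual_points / t.estimated_points for t in nearest)
    return suggested, growth >= creep_ratio


history = [
    Ticket(frozenset({"backend", "payments"}), estimated_points=3, actual_points=8),
    Ticket(frozenset({"backend", "payments", "api"}), estimated_points=5, actual_points=8),
    Ticket(frozenset({"frontend"}), estimated_points=2, actual_points=2),
    Ticket(frozenset({"backend", "payments"}), estimated_points=3, actual_points=5),
]
print(suggest_estimate(frozenset({"backend", "payments"}), history))  # (8, True)
```

Because the result is traceable to specific past tickets, the team can see why the tool suggested 8 points and flagged creep, and overrule it: an input, not a verdict.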

Security auditing is a strong co-pilot use case. Automated scanning tools catch known vulnerability signatures well. However, business logic vulnerabilities, the kind where a legitimate API call is misused across account boundaries, require a human security engineer who understands the product flow, not just the code structure.
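To make the cross-account case concrete, here is a hypothetical invoice lookup in Python. Both versions are syntactically clean and give a signature-based scanner nothing obvious to match; only the second enforces the business rule that a caller may read only its own account's invoices. The `Invoice` type, the in-memory store, and the account IDs are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Invoice:
    invoice_id: str
    account_id: str
    amount_cents: int


# Hypothetical in-memory store standing in for a database table.
INVOICES = {
    "inv-1": Invoice("inv-1", "acct-A", 12_000),
    "inv-2": Invoice("inv-2", "acct-B", 99_000),
}


def get_invoice_vulnerable(caller_account: str, invoice_id: str) -> Invoice:
    # The caller is authenticated and the lookup is ordinary, but nothing ties the
    # invoice to the caller: account A can read account B's invoice by ID.
    return INVOICES[invoice_id]


def get_invoice(caller_account: str, invoice_id: str) -> Invoice:
    invoice = INVOICES.get(invoice_id)
    # Ownership check encoding the business rule. "Missing" and "not yours" share one
    # error so callers cannot probe which invoice IDs exist in other accounts.
    if invoice is None or invoice.account_id != caller_account:
        raise LookupError(f"invoice {invoice_id} not found")
    return invoice
```

Spotting that the first function is wrong requires knowing that invoices are account-scoped, which is product knowledge rather than something visible in the code structure.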

Database design and query optimization can benefit enormously from AI suggestions, but the final schema decisions involve trade-offs around access patterns, future product direction, and infrastructure costs that a model cannot evaluate without full business context.

Where Humans Remain Non-Negotiable

Production deployments are the sharpest example. Every organization that has operated infrastructure at scale knows that the gap between staging and production is not just a technical gap; it is a risk management problem. Production carries live user data, contractual SLA obligations, regulatory requirements, and a blast radius that automated systems cannot fully model. A human making the final deployment call, watching real-time dashboards, and staying on call during a release window is not a legacy practice; it is responsible engineering.
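One way to encode "a human makes the final call" in a pipeline is an explicit approval gate that automation cannot satisfy on its own. The sketch below is hypothetical: `Approval`, `promote_to_production`, and the convention that service accounts end in `-bot` are assumptions, and real CI/CD systems provide their own approval mechanisms.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Approval:
    approver: str           # a named person; hypothetical rule: service accounts end in "-bot"
    watching_release: bool  # approver confirms they are on dashboards and on call for the window
    approved_at: datetime


class DeploymentBlocked(Exception):
    """Raised when a production promotion lacks the required human sign-off."""


def promote_to_production(build_id: str, staging_passed: bool,
                          approval: Optional[Approval]) -> str:
    """Automation prepares the release; a named, present human makes the final call."""
    if not staging_passed:
        raise DeploymentBlocked(f"{build_id}: staging checks failed")
    if approval is None:
        raise DeploymentBlocked(f"{build_id}: no human approval recorded")
    if approval.approver.endswith("-bot"):
        raise DeploymentBlocked(f"{build_id}: service accounts cannot approve production")
    if not approval.watching_release:
        raise DeploymentBlocked(f"{build_id}: approver must stay on call during the release")
    # A real pipeline would start the rollout here; the sketch returns an audit record.
    return f"{build_id} promoted by {approval.approver} at {approval.approved_at.isoformat()}"


ok = Approval("alice", watching_release=True,
              approved_at=datetime(2026, 1, 15, 9, 30, tzinfo=timezone.utc))
print(promote_to_production("build-42", True, ok))
# build-42 promoted by alice at 2026-01-15T09:30:00+00:00
```

The returned string doubles as an audit trail: it records which person took responsibility for the release and when.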

Cloud infrastructure setup and architecture provisioning remain deeply human-intensive. Yes, infrastructure-as-code tools and AI-assisted Terraform generation exist. But designing the initial network topology, choosing availability zone strategies, setting up IAM policies that are least-privilege without breaking legitimate service communication, and deciding which managed services to use versus self-host all require architectural judgment, cost modeling, and organizational context that AI tools cannot reliably provide.
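Part of this work can be checked mechanically. The sketch below lints an AWS-style IAM policy document for `Allow` statements with wildcard actions (`*` or `service:*`) or a `*` resource. It is a minimal first pass under stated assumptions: it ignores `NotAction`, `NotResource`, and conditions, and it cannot tell whether a narrower policy would break legitimate service-to-service calls, which is exactly the judgment the paragraph reserves for humans.

```python
import json


def _as_list(value) -> list:
    """IAM accepts either a single value or a list for Statement, Action, and Resource."""
    return value if isinstance(value, list) else [value]


def wildcard_findings(policy_json: str) -> list[str]:
    """Flag Allow statements that grant wildcard actions or apply to every resource."""
    policy = json.loads(policy_json)
    findings = []
    for i, stmt in enumerate(_as_list(policy.get("Statement", []))):
        if stmt.get("Effect") != "Allow":
            continue  # Deny statements narrow access, so wildcards there are not a grant
        for action in _as_list(stmt.get("Action", [])):
            if action == "*" or action.endswith(":*"):
                findings.append(f"statement {i}: broad action {action!r}")
        if "*" in _as_list(stmt.get("Resource", [])):
            findings.append(f"statement {i}: applies to every resource ('*')")
    return findings


policy = """{
  "Version": "2012-10-17",
  "Statement": [
    {"Effect": "Allow", "Action": "s3:*", "Resource": "*"},
    {"Effect": "Allow", "Action": ["dynamodb:GetItem"],
     "Resource": "arn:aws:dynamodb:us-east-1:123456789012:table/orders"},
    {"Effect": "Deny", "Action": "*", "Resource": "*"}
  ]
}"""
for finding in wildcard_findings(policy):
    print(finding)
# statement 0: broad action 's3:*'
# statement 0: applies to every resource ('*')
```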

Compliance, data governance, and regulatory sign-off are legally accountable processes. When a system handles GDPR-regulated personal data or falls under HIPAA, ISO 27001, or PCI-DSS, someone’s name is attached to the resulting certification or attestation. An AI cannot be named in a compliance report. The engineer and the organization carry the liability.

Stakeholder communication and change management are just as human. When a major refactor will affect a downstream team, or when an engineering trade-off needs to be explained to a non-technical executive who controls the budget, the work depends on communication, empathy, and an understanding of relationship dynamics that no current model navigates reliably.

Incident response and root cause analysis during a production outage are extremely high-stakes, time-sensitive situations where experienced engineers read noisy, incomplete signals and make judgment calls. AI tools can surface relevant logs and suggest hypotheses, but the engineer who knows the system’s history, has seen this failure mode before, and knows which team to call at 2 AM is irreplaceable.

Ethical reviews and AI model governance, somewhat ironically, require the most careful human attention. Decisions about what data a model is trained on, whether an AI-powered feature could introduce bias against a protected class, and how to communicate model limitations to users are not purely engineering problems. They are problems of organizational and moral responsibility.

A Useful Mental Model

Think of the SDLC in three zones:

Automation zone: repetitive, well-defined tasks with clear success criteria. AI owns this. Code scaffolding, unit test generation, documentation, dependency analysis.

Augmentation zone: complex tasks where AI dramatically raises the quality and speed of human output but the human provides context, judgment, and accountability. Architecture reviews, sprint planning, security audits, PR feedback.

Human critical zone: tasks where the stakes, ambiguity, accountability, or interpersonal dimension cannot be delegated. Production go-lives, infrastructure design, compliance, incident response, and any decision that affects real people and carries legal or ethical weight.
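The three zones can be read as a rough decision rule. The sketch below encodes one interpretation of it in Python; the `Task` attributes are hypothetical simplifications of the criteria above, and checking stakes before anything else is a design choice of this sketch, not something the framework prescribes.

```python
from dataclasses import dataclass
from enum import Enum


class Zone(Enum):
    AUTOMATION = "automation"          # AI owns the task
    AUGMENTATION = "augmentation"      # AI raises quality and speed; a human decides
    HUMAN_CRITICAL = "human critical"  # cannot be delegated


@dataclass(frozen=True)
class Task:
    name: str
    well_defined: bool   # clear, checkable success criteria
    repetitive: bool
    needs_context: bool  # depends on business, organizational, or historical knowledge
    high_stakes: bool    # production blast radius, legal, or ethical weight
    interpersonal: bool  # negotiation, change management, stakeholder trust


def classify(task: Task) -> Zone:
    # Stakes and people come first: no amount of repetitiveness moves these out.
    if task.high_stakes or task.interpersonal:
        return Zone.HUMAN_CRITICAL
    if task.well_defined and task.repetitive and not task.needs_context:
        return Zone.AUTOMATION
    return Zone.AUGMENTATION


for task in [
    Task("unit test generation", True, True, False, False, False),
    Task("architecture review", False, False, True, False, False),
    Task("production go-live", True, True, True, True, False),
]:
    print(f"{task.name}: {classify(task).value}")
# unit test generation: automation
# architecture review: augmentation
# production go-live: human critical
```

With this ordering, a routine and well-rehearsed production release still lands in the human-critical zone, because its blast radius outweighs how repetitive it is.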

What This Means for Engineering Teams

The teams getting the most out of AI are not the ones that handed it the most tasks. They are the ones that were deliberate about which tasks to hand over. They identified their highest-friction, lowest-judgment work, automated it aggressively, and redirected engineering attention toward architecture quality, system reliability, and product thinking: the work that compounds.

The engineers who will thrive are not those who resist AI tools, nor those who blindly trust them. They are the ones who understand the boundary between where AI is a reliable collaborator and where human expertise is the actual product being delivered.

That boundary is not fixed. It will shift. But understanding where it sits today is one of the most practical things an engineering team can do in 2026.

#ArtificialIntelligence #GenerativeAI #SoftwareDevelopment #SDLC #AIinTech #CodingWithAI #DevOps #SoftwareEngineering #GitHubCopilot #AIDevelopment #CloudComputing #TechLeadership #AgileAI #MLEngineering #FutureOfWork

If you like this article and want to show some love:

- Visit my blogs

- Follow me on Medium and subscribe for free to catch my latest posts.

- Let’s connect on LinkedIn / Ajay Verma
